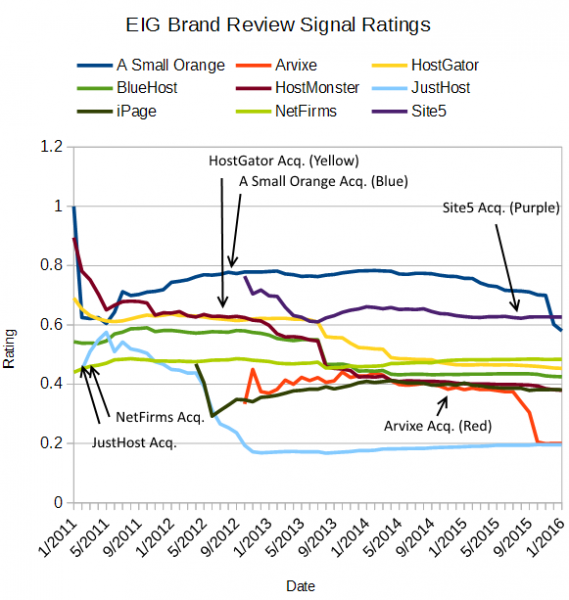

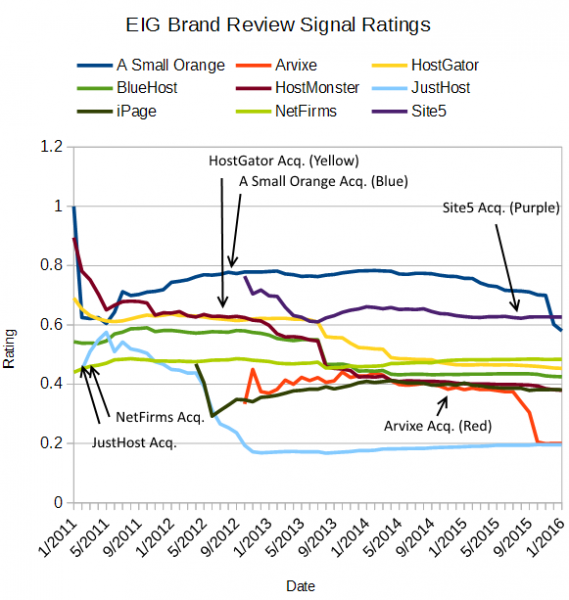

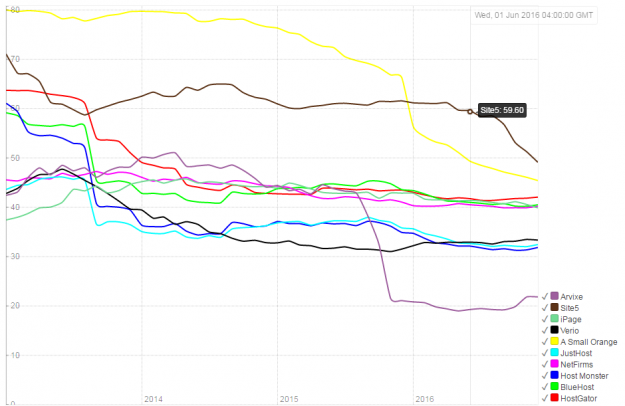

"You'll notice their ratings, in general, are not very good with Site5 (their most recent acquisition) being the exception. iPage was acquired before I started tracking data. BlueHost/HostMonster also had a decline, although the data doesn't start pre-acquisition. JustHost collapses post acquisition. NetFirms has remained consistently mediocre. HostGator collapses with a major outage a year after acquisition. Arvixe collapses a year after being acquired. Site5 is still very recent and hasn't shown any signs of decline yet." - The Rise and Fall of A Small Orange, January 2016

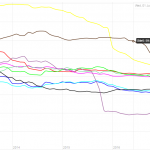

Review Signal Rating Calculated Pos/(Pos+Neg), without duplicate filtering (January 2016)

That's what I wrote at the beginning of 2016, as I watched A Small Orange's rating collapse, in a fairly popular post called The Rise and Fall of A Small Orange. It documented ASO's rise and fall, but also the fall of many other EIG brands. One thing I mentioned was the recent acquisition of Site5 (and Verio), which had a fairly good rating on Review Signal at the time of acquisition. The trend seemed to be that the rating dropped roughly a year after acquisition.

Site5 ~ 1 Year Later

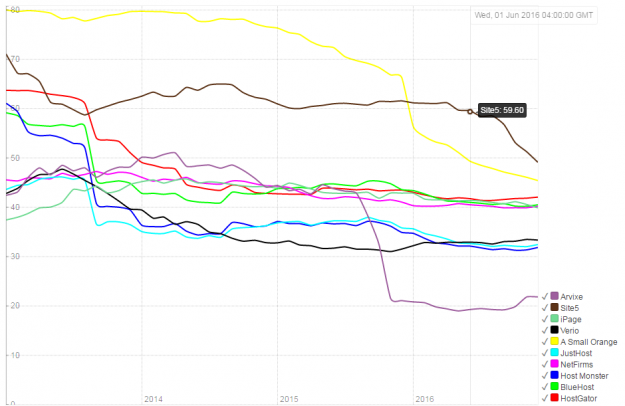

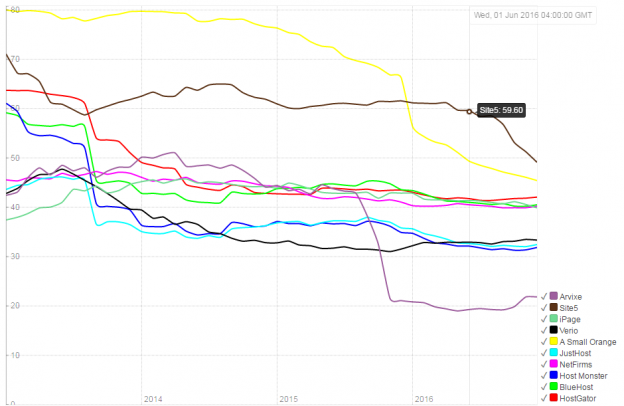

The acquisition of Site5 was announced in August 2015. Here's the updated EIG brand tracking graph. One thing to note: it now uses the new rating algorithm, which has a built-in decay function that weights older reviews less. Every point on the new graph is calculated as if the new algorithm had always been in use, so there will be some differences between it and the original graph (which prompted the change in algorithm). The difference is minimal for most brands; only when there is a major change in sentiment does the new algorithm show the change more quickly. Full details about the change can be read in Review Signal Ranking Algorithm Update.
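To make the difference between the two graphs concrete, here is a minimal Python sketch of a plain Pos/(Pos+Neg) rating next to a decay-weighted one. It is not Review Signal's actual algorithm: the exponential decay, the 365-day half-life, the function names, and the sample reviews are all assumptions chosen for illustration.

```python
from datetime import date

def plain_rating(reviews):
    """Pos / (Pos + Neg), with every review counted equally."""
    if not reviews:
        return None
    positives = sum(1 for _, is_positive in reviews if is_positive)
    return positives / len(reviews)

def decayed_rating(reviews, as_of, half_life_days=365.0):
    """Same ratio, but older reviews count for less.

    Assumption: a review's weight halves every `half_life_days`.
    The real decay function is not described in this post.
    """
    positive_weight = 0.0
    total_weight = 0.0
    for posted_on, is_positive in reviews:
        if posted_on > as_of:
            continue  # only use reviews that existed at that point in time
        age_days = (as_of - posted_on).days
        weight = 0.5 ** (age_days / half_life_days)
        total_weight += weight
        if is_positive:
            positive_weight += weight
    return positive_weight / total_weight if total_weight else None

# Made-up reviews: two older positive ones, one recent negative one.
sample_reviews = [
    (date(2015, 1, 1), True),
    (date(2015, 1, 1), True),
    (date(2016, 1, 1), False),
]
print(plain_rating(sample_reviews))
print(decayed_rating(sample_reviews, as_of=date(2016, 1, 1)))
```

Calling `decayed_rating` with a different `as_of` date for each point on the graph is one way to recompute history as if the decay had always been applied. With the made-up data, the recent negative review pulls the decayed rating down further than the plain ratio, which is the "shows a change more quickly" behavior described above.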

What you can see is that Site5's reputation remained relatively stable until about April 2016 and then started a slow but steady decline, recently dipping below 50% for the first time. As with nearly every brand, except A Small Orange, the decline happened within a year.

Since the original post, there also hasn't been much movement in any other brands beyond Site5 crashing and A Small Orange continuing to slide downward. Verio didn't see a dip post-acquisition, but it had a pretty low rating to start with, which already put it in the bottom half of EIG brand ratings.

Why Do EIG Brands Go Down Post Acquisition?

The longer I am in this industry, the more stories I hear. A Small Orange was such an interesting exception, and I've heard a lot about it from a lot of people. Its relative independence and keeping the staff seemed to be the key to maintaining a good brand even within the EIG conglomerate.

Site5 offers what I imagine is more business-as-usual in the EIG world: cut staff, migrate to EIG and maximize profit (in the short term). According to SiteGround's blog, Site5's founder, Ben, reached out to SiteGround, a competitor, and arranged for them to hire a large number of Site5 staff that EIG had no plans to keep. A very classy move from the former CEO and a seeming win for SiteGround, one of EIG's larger hosting competitors. I saw similar behavior when A Small Orange started to go downhill: long-time staff all left, and staff from other EIG brands showed up.

Beyond simply trying to cut costs, you have to wonder: why spend all that money acquiring brands that have lots of customers, good reputations and talented staff who are obviously keeping the operation running successfully, only to get rid of nearly all of that except the customers? Once you gut the staff, it seems the customers notice, because it certainly shows up in the data I track.

Conveniently, EIG just published their Q3 2016 10-Q.

We have certain hosting and other brands to which we no longer allocate significant marketing or other funds. These brands generally have healthy free cash flow, but we do not consider them strategic or growth priorities. Subscriber counts for these non-strategic brands are decreasing. While our more strategic brands, in the aggregate, showed net subscriber adds during the quarter ended September 30, 2016, the net subscriber losses in non-strategic brands and certain gateway brands contributed to a decrease in our total subscribers of approximately 42,000 during the quarter. We expect that total subscribers will continue to decrease in the near term.

Overall, our core hosting and web presence business showed relatively slow revenue and subscriber growth during the first nine months of 2016. We believe that this is due to flat marketing expenditures relative to 2015 levels on this business in the first half of 2016 as a result of our focus on gateway products during that period, and to trends in the competitive landscape, including greater competition for referral sources and an increasing trend among consumers to search for web presence and marketing solutions using brand-related search terms rather than generic search terms such as “shared hosting” or “website builder”. We believe this trend assists competitors who have focused more heavily than we have on building consumer awareness of their brand, and that it has made it more challenging and more expensive for us to attract new subscribers. In order to address this trend, during the third quarter of 2016, we began to allocate additional marketing investment to a subset of our hosting brands, including our largest brands, Bluehost.com, HostGator and iPage. We plan to continue this increased level of marketing investment in the near term, and are evaluating different marketing strategies aimed at increasing brand awareness.

So the result of their current strategy this past quarter has been a net loss of approximately 42,000 subscribers. They say their strategic brands had a net subscriber increase in aggregate, they name the largest brands (BlueHost, HostGator, iPage), and they are going to focus on a subset of brands going forward. But the "in the aggregate" phrasing would seem to imply that some of the strategic brands experienced losses as well. It also means that the non-strategic brands, together with the gateway brands the filing mentions, lost more than 42,000 subscribers, since their losses had to cancel out the strategic brands' gains before pulling the total down to roughly -42,000 last quarter.
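The arithmetic behind that last point can be sketched in a few lines of Python. The only figure taken from the 10-Q is the approximate total of -42,000. The strategic-brand gains below are hypothetical placeholders, since the filing does not disclose them, and the function name is illustrative.

```python
# Approximate total net subscriber change for the quarter, per the 10-Q excerpt.
TOTAL_NET_CHANGE = -42_000

def implied_other_brand_change(strategic_net_adds: int) -> int:
    """Net change implied for all brands outside the strategic group.

    total = strategic + other, so other = total - strategic.
    """
    return TOTAL_NET_CHANGE - strategic_net_adds

# Hypothetical strategic gains: the real number is not in the filing.
for hypothetical_gain in (1, 10_000, 50_000):
    print(hypothetical_gain, implied_other_brand_change(hypothetical_gain))
```

Whatever positive number you plug in for the strategic brands, the implied change for the remaining brands comes out below -42,000, which is why their combined losses must be larger than the headline figure.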

To cap it all off, I got one of the most surprising emails from Site5 a couple of days ago.

We wanted to let you know that we’ve decided to terminate the Site5 Affiliate program as of November 30th, 2016.

We want to thank you for your support of Site5, especially during our most recent move into Impact Radius, and we hope that you’ll consider promoting another one of Endurance’s other programs.

I guess Site5 isn't considered a strategic brand if they are killing off its affiliate channel entirely, right after a big migration from Site5's custom affiliate program to Impact Radius. They also asked that affiliates promote HostGator now, which certainly fits in the strategic brand category.

It's extremely disappointing to see this trend of brands collapsing after a year in EIG's hands continue. What will be interesting going forward is that EIG hasn't acquired any new hosting brands for a while. They seem to be focused on their existing brands for now. I wonder if that means we will see any noticeable improvement in existing brands (or at least in some of the strategic brands).
