Endurance International Group (NASDAQ: EIGI) published their 10-K on February 24, 2017.

EIG is one of the biggest players in the hosting space, so I like to look through their filings and see what's going on with them.

2016's biggest news for EIG was the acquisition of Constant Contact for $1.1 billion. Their financials are now broken out into two segments: Web Presence (hosting, domains, etc.) and Email Marketing (Constant Contact).

The filing reinforces early on that BlueHost and HostGator are their primary brands and that they plan on pushing them with more brand advertising (TV, podcasts, etc.). I wonder if we will see a BlueHost Super Bowl ad to compete with GoDaddy?

In 2015, our total subscriber base increased. In 2016, excluding the effect of acquisitions and adjustments, our total subscriber base was essentially flat, and in our web presence segment, ARPS decreased from $14.18 for 2015 to $13.65 for 2016. We expect that our total subscriber base will decrease in 2017. The factors contributing to our lack of growth in total subscribers and decrease in web presence segment ARPS during 2016 and our expected decrease in total subscribers during 2017 are discussed in “Item 7 - Management’s Discussion and Analysis of Financial Condition and Results of Operations ”. If we are not successful in addressing these factors, including by improving subscriber satisfaction and retention, we may not be able to return to or maintain positive subscriber or revenue growth in the future, which could have a material adverse effect on our business and financial results.

| Year Ended December 31, | 2014 | 2015 | 2016 |
|---|---|---|---|
| Consolidated metrics: | | | |
| Total subscribers | 4,087 | 4,669 | 5,371 |
| Average subscribers | 3,753 | 4,358 | 5,283 |
| Average revenue per subscriber | $13.98 | $14.18 | $17.53 |
| Adjusted EBITDA | $171,447 | $219,249 | $288,396 |
| Web presence segment metrics: | | | |
| Total subscribers | | | 4,827 |
| Average subscribers | | | 4,789 |
| Average revenue per subscriber | | | $13.65 |
| Adjusted EBITDA | | | $172,135 |
| Email marketing segment metrics: | | | |
| Total subscribers | | | 544 |
| Average subscribers | | | 494 |
| Average revenue per subscriber | | | $55.11 |
| Adjusted EBITDA | | | $116,26 |

Overall, it's probably not a good sign to see Average Revenue Per Subscriber (ARPS) going down in their hosting segment, which was the core of the business. The Email segment is offsetting, and hiding, a lot of that in the consolidated numbers.
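To see how much the email segment props up the headline number, here is a rough sanity check in Python. It uses only the 2016 figures from the table and the 2015 web presence ARPS of $14.18 quoted from the filing. The assumption (mine, not something the 10-K states) is that consolidated ARPS behaves like a subscriber-weighted average of the two segments; the function and variable names are purely illustrative.

```python
# 2016 segment figures copied from the table above.
SEGMENTS_2016 = {
    "web_presence": {"avg_subscribers": 4789, "arps": 13.65},
    "email_marketing": {"avg_subscribers": 494, "arps": 55.11},
}
# 2015 web presence ARPS, as quoted from the risk-factor text.
WEB_PRESENCE_ARPS_2015 = 14.18


def blended_arps(segments):
    """Subscriber-weighted average of segment ARPS (an assumed relationship)."""
    total_subs = sum(s["avg_subscribers"] for s in segments.values())
    weighted = sum(s["avg_subscribers"] * s["arps"] for s in segments.values())
    return weighted / total_subs


def pct_change(old, new):
    """Percentage change from old to new."""
    return (new - old) / old * 100


blended = blended_arps(SEGMENTS_2016)
web_change = pct_change(
    WEB_PRESENCE_ARPS_2015, SEGMENTS_2016["web_presence"]["arps"]
)

print(f"Blended 2016 ARPS: ${blended:.2f}")
print(f"Web presence ARPS change, 2015 to 2016: {web_change:.1f}%")
```

Under that assumption the blend comes out at about $17.53, matching the consolidated figure, while the hosting side on its own is down roughly 3.7%. In other words, the jump in consolidated ARPS comes from adding a small number of high-paying email subscribers, not from the hosting business improving.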

HostGator, iPage, Bluehost, and our site builder brand) showed positive net subscriber adds in the aggregate during 2016, but these positive net adds were outweighed by the negative impact of subscriber losses in non-strategic hosting brands, our cloud storage and backup solution, and discontinued gateway products such as our VPN product. We expect total subscribers to decrease overall and in our web presence segment during 2017, due primarily to the impact of subscriber churn in these non-strategic and discontinued brands. We expect total subscribers to remain flat to slightly down in our email marketing segment.

The future doesn't look good based on these statements. Decreasing ARPS and decreasing subscriber base seem like a recipe for decline. They don't seem to even expect growth in the email marketing segment. I'm really having a hard time seeing any positive outlook on this.

In 2017, we are focused on improving our product, customer support and user experience within our web presence segment in order to improve our levels of customer satisfaction and retention. If this initiative is not successful, and if we are unable to provide subscribers with quality service, this may result in subscriber dissatisfaction, billing disputes and litigation, higher subscriber churn, lower than expected renewal rates and impairments to our efforts to sell additional products and services to our subscribers, and we could face damage to our reputation, claims of loss, negative publicity or social media attention, decreased overall demand for our solutions and loss of revenue, any of which could have a negative effect on our business, financial condition and operating results.

Our planned transfer of our Bluehost customer support operations to our Tempe, Arizona customer support facility presents a risk to our customer satisfaction and retention efforts in 2017. Although we believe that the move to Tempe will ultimately result in better customer support, the transition may have the opposite effect in the short term. We expect that the transition will take place in stages through the fourth quarter of 2017, and until the transition is complete, we may continue to handle some support calls from our current Orem, Utah customer support center. The morale of our customer support agents in Orem may be low due to the pending closure of the Orem office, and agents may decide to leave for other opportunities sooner than their scheduled departure dates. Either or both of these factors could result in a negative impact on Bluehost customer support, which could lead to subscriber cancellations and harm to our reputation, and generally impede our efforts to improve customer satisfaction and retention in the short term. In addition, we are consolidating our Austin, Texas support operation into our Houston, Texas support center, which could also negatively impact customer support provided from those locations during the transition period.

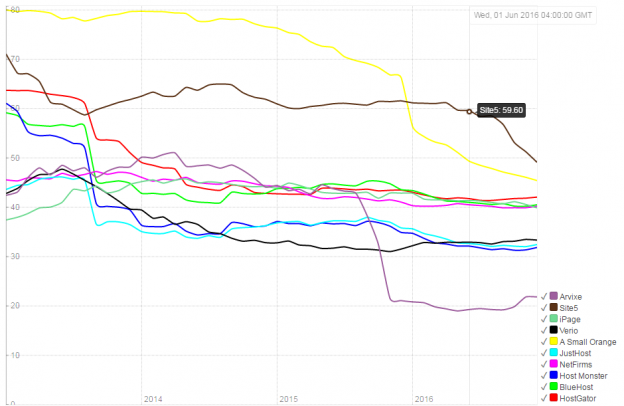

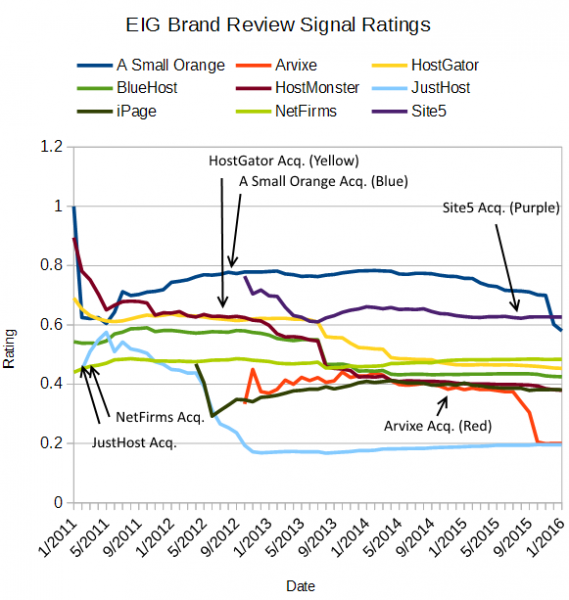

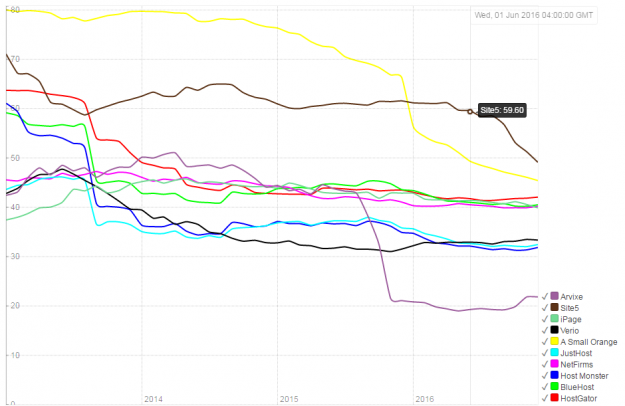

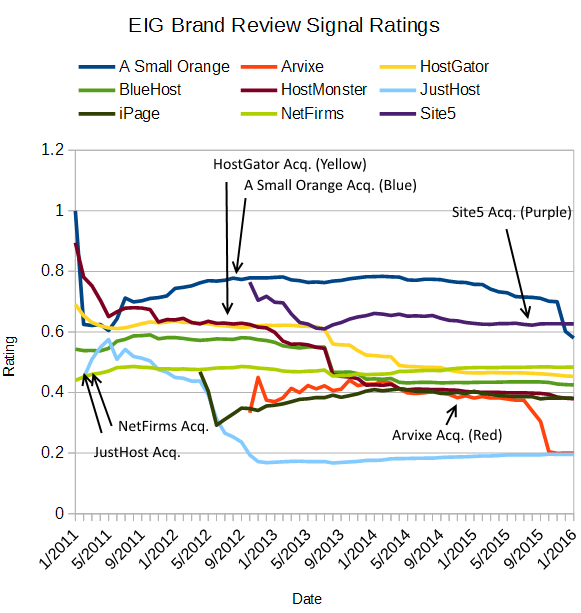

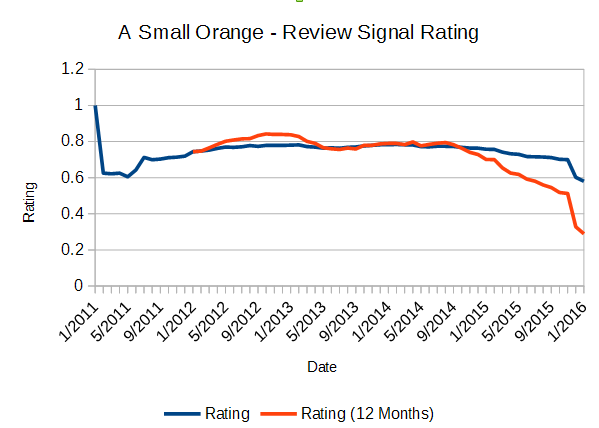

The story about BlueHost getting rid of hundreds of jobs in Orem was widely talked about. It also came up that A Small Orange was getting some of the same treatment, which would be in line with closing the Austin operation where ASO was based. It's interesting to see EIG selling this as a 'long term' move, unless it's entirely a financial one to reduce costs. I've yet to see a single EIG brand substantially increase its rating, but EIG has destroyed plenty of them (see The Rise and Fall of A Small Orange or The Sinking of Site5). The companies they acquired often had much better ratings and knew how to provide customer support.

I did find one interesting bit in the contract with Tregaron India Holdings (operating as GLOWTOUCH or Diya): the line item for "New hire and ongoing training for all support positions." It makes it sound like this third-party company is responsible for training all EIG support staff, along with many other things like migrations. Those migrations have been absolutely disastrous. The Arvixe migration was done by this group, and it is how Arvixe ended up as one of Review Signal's lowest rated brands.

But who are Tregaron?

The Company has contracts with Tregaron India Holdings, LLC and its affiliates, including Diya Systems (Mangalore) Private Limited, Glowtouch Technologies Pvt. Ltd. and Touchweb Designs, LLC, (collectively, “Tregaron”), for outsourced services, including email- and chat-based customer and technical support, network monitoring, engineering and development support, web design and web building services, and an office space lease. These entities are owned directly or indirectly by family members of the Company’s chief executive officer, who is also a director and stockholder of the Company.

In 2016 EIG spent $14,300,000 with Tregaron. And it wasn't the only business connected to the CEO.

The Company also has agreements with Innovative Business Services, LLC (“IBS”), which provides multi-layered third-party security applications that are sold by the Company. IBS is indirectly majority owned by the Company’s chief executive officer and a director of the Company, each of whom are also stockholders of the Company. During the year ended December 31, 2014, the Company’s principal agreement with this entity was amended which resulted in the accounting treatment of expenses being recorded against revenue.

Another $5,100,000 for this particular company.

So how bad were those migrations?

A key purpose of many of our smaller acquisitions, typically acquisitions of small hosting companies, has been to achieve subscriber growth, cost synergies and economies of scale by migrating customers of these companies to our platform. However, for several of our most recent acquisitions of this type, migrations to our platform have taken longer and been more disruptive to subscribers than we anticipated. If we are unable to improve upon our recent migration efforts and continue to experience unanticipated delays and subscriber disruption from migrations, we may not be able to achieve the expected benefits from these types of acquisitions.

Understatement at its finest.

Overall, things look pretty glum at EIG, which was trading at over $9 on the day this came out and is now under $8/share.

I generally try to keep my opinions fairly limited, but some things need to be called out for the good of consumers. EIG has acquired a lot of talented people and managed to squander them repeatedly. I'm not sure why the company seems to be so bad at retaining good talent. When EIG writes statements about trying to improve customer service after acquiring some of the highest rated brands Review Signal tracks (A Small Orange) and then dismantling them, it creates cognitive dissonance.

Perhaps EIG needs to get rid of the top management. The incestuous relationships between the contracted companies and the CEO create some questionable incentives. Combined with the objectively poor results from those companies on things like migrations, it seems inexcusable. I'm not optimistic about anything EIG are doing and feel bad for some of the exceptional people I know that still work there.
