Disclaimer: I own/have owned shares of $DOCN (Digital Ocean). None of my opinions or analysis is financial advice. I attempt to be as impartial as possible, but disclosing the financial relationship is important for transparency.

Cloudways announced its first price increases since 2017 on its blog today; they take effect April 1, 2023. This is interesting because it's the first major move since Cloudways was acquired by Digital Ocean in August 2022 for a whopping $350 million.

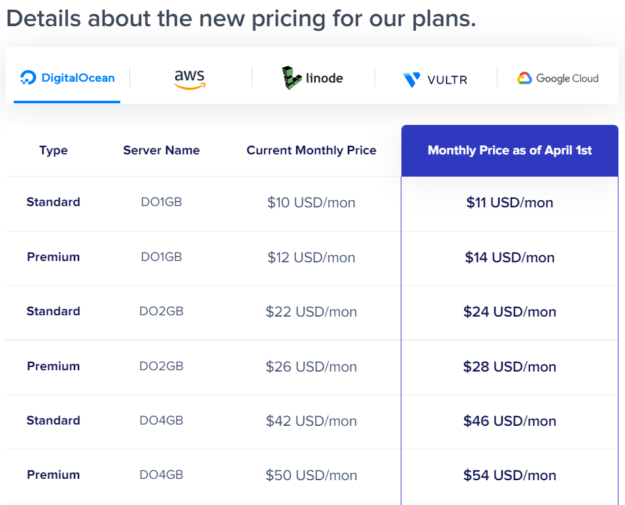

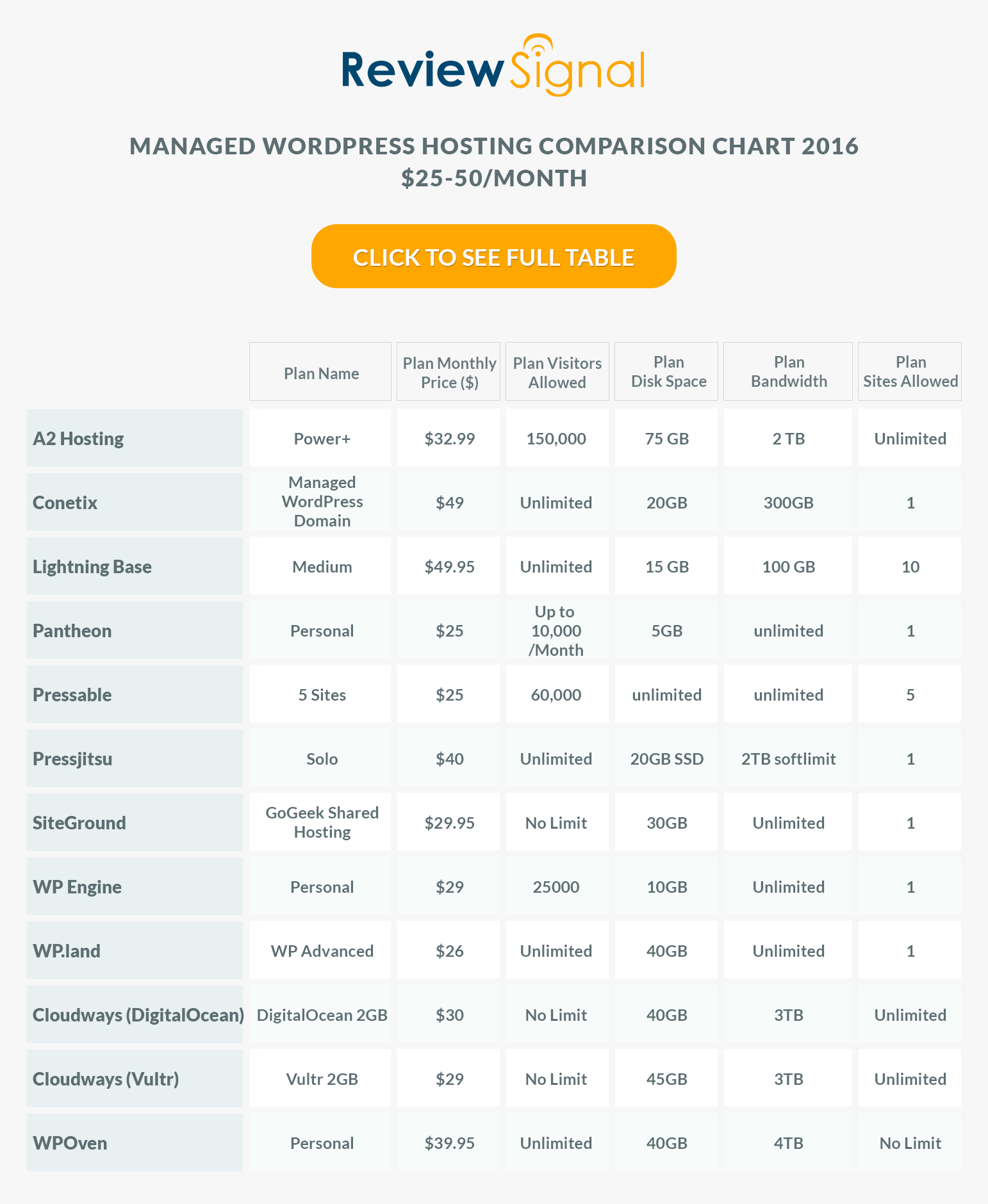

There is a very nice looking chart which shows you all the price changes by cloud provider.

The chart/tool is very easy to understand and clearly shows users the price increases. I appreciate clarity, transparency and notice about the price increase.

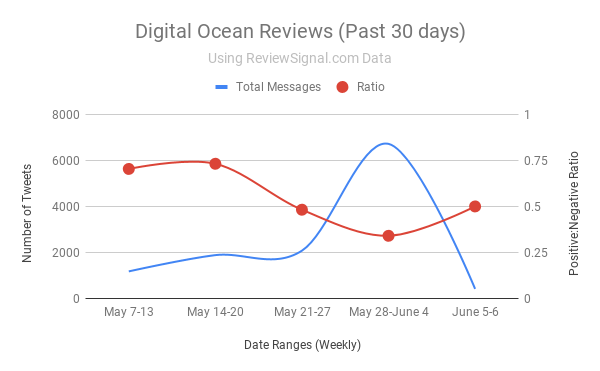

The increase did pique my curiosity though. How were the price increases distributed by cloud provider, now that Cloudways suddenly has a vested interest in Digital Ocean's success? There are so few publicly traded web hosting companies that I enjoy analyzing the few there are. Digital Ocean may be the only meaningful publicly traded company in the US that focuses solely on web hosting (sorry Rackspace, the financials don't look good and the recent hack doesn't help; Amazon, Google, Microsoft and GoDaddy all compete in multiple spaces beyond hosting). I used to enjoy analyzing Endurance International Group (EIG, now Newfold Digital) when their financials were released, as they were a pure web hosting play, but they were taken private a few years ago. Today, I get to analyze Digital Ocean's first move with Cloudways and then look later to see how it actually played out when financials are released. Without further ado...

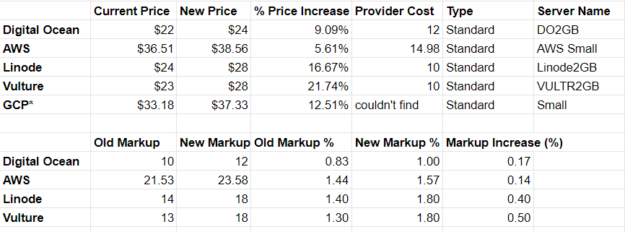

I spent longer than I care to admit creating the following spreadsheet. I wanted to know how much the providers charge directly, how much Cloudways charges now for the same instance, and how much that price is going up in absolute and percentage terms.

The results were in line with what I expected. Digital Ocean, especially at the lower tiers, is getting an incredibly favorable deal versus its competitors.

If we look at 2GB RAM instances across providers, we see the following:

What's interesting is that Digital Ocean seems to have had the best deal by a significant margin even before the price increases. Its 2GB instance costs more at the provider ($12) than Linode's or Vultr's ($10), yet it was selling for $1-2 less on Cloudways before the price increases. The markup was 83% for Digital Ocean versus 130% for Vultr and 140% for Linode.

After the price increases the difference is even more pronounced, with Linode and Vultr both ending at a 180% markup while Digital Ocean is at only 100%.
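For anyone who wants to check the markup figures, here is a minimal sketch of the calculation. The provider prices ($12 and $10) come from the text above. The Cloudways prices are assumptions: they are back-calculated so that they reproduce the stated markups and the "$1-2 cheaper" gap, not copied from the Cloudways price chart. The function name `markup_pct` is just an illustrative choice.

```python
# Provider list prices for 2GB instances, as quoted in the text.
PROVIDER_PRICE = {"Digital Ocean": 12, "Vultr": 10, "Linode": 10}

# ASSUMED Cloudways prices, back-calculated to match the stated markups.
# They are not taken from the Cloudways price chart.
CLOUDWAYS_BEFORE = {"Digital Ocean": 22, "Vultr": 23, "Linode": 24}
CLOUDWAYS_AFTER = {"Digital Ocean": 24, "Vultr": 28, "Linode": 28}


def markup_pct(resale_price: float, provider_price: float) -> float:
    """Markup as a percentage of what the provider charges directly."""
    return (resale_price - provider_price) / provider_price * 100


for name, base in PROVIDER_PRICE.items():
    before = markup_pct(CLOUDWAYS_BEFORE[name], base)
    after = markup_pct(CLOUDWAYS_AFTER[name], base)
    print(f"{name:<14} before: {before:6.1f}%   after: {after:6.1f}%")
```

Under these assumed prices, the gap between Digital Ocean's markup and the others' widens from roughly 47-57 percentage points before the increase to 80 points after it.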

I became even more curious. Amazon and Google don't seem like the biggest direct competitors to Digital Ocean, since they have much higher pricing already. I suspect if you're picking Amazon/Google, you're picking them for the brand, not the price to performance ratio. Linode and Vultr, on the other hand, have been comparable services to Digital Ocean for a long time. How did markups vary between them?

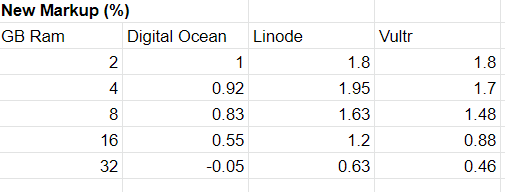

Digital Ocean vs Linode vs Vultr - New Markup %

At the 2GB level, the price increase brought Linode and Vultr to the same markup, but the differences at higher tiers are interesting. Linode's markup actually rises at the next tier up. After that, the markup % decreases for all of them. It's interesting to note that Vultr's markup goes down more than Linode's despite the two starting equal at the 2GB tier.

Digital Ocean's markup goes negative at the 32GB tier (and the plan itself offers vastly more storage and data transfer than the publicly listed plan). So it's actually cheaper to get a 32GB plan through Cloudways than to buy direct from Digital Ocean. That is certainly one way to attract new higher-value customers to the platform.

Analysis

After spending time looking at the data, the price increases seem to send a clear message. Digital Ocean owns Cloudways. It's making itself the most attractive option on the platform, even offering better deals than it offers direct in some cases. That is exactly what I expected after the acquisition: Digital Ocean should be leveraging Cloudways to increase its value. It would be capturing margin on both the underlying infrastructure provided by Digital Ocean and the management/services layer offered by Cloudways.

The concern as a consumer would be getting pushed towards certain infrastructure providers by pricing controlled by the middleman instead of the infrastructure providers themselves. Does this open the door more widely for competitors to step in and offer multicloud management/service layers with less pricing inequality? Or are most consumers already price conscious and using Digital Ocean through Cloudways?

As an investor, I would have similar concerns about what it would do for the brand and competition. I thought the acquisition of Cloudways was smart by Digital Ocean (perhaps not the purchase price, but the company itself makes sense to own). It meant that for every customer on Cloudways, Digital Ocean would be getting a cut, even from customers using its competitors. That seemed like a great model. If Digital Ocean adds a slight incentive to use it as the infrastructure provider as well, it makes even more sense. I just hope the price point keeps Cloudways competitive as an option on the other clouds, so Digital Ocean can continue to take a cut of every hosting transaction regardless of the cloud provider, rather than Cloudways becoming solely a funnel for Digital Ocean products.

The timing of the price increase also suggests that Digital Ocean, the publicly traded company, likely needs to become profitable. It recently went through a round of layoffs and recorded a loss last quarter. It has only recorded a profit once, the quarter before last. With rising interest rates and tech stocks collapsing, it probably isn't the best time to be growing by taking on more debt and running at a loss. We've seen a lot of providers raising prices lately, citing energy costs, inflation and probably other reasons. It's probably a reasonable time to pull the trigger on the increase given the macroeconomic circumstances.

Cloudways was expected to generate roughly $50 million in revenue in 2022, so a 5-10% increase in revenue would be $2.5-5 million on an annual basis, or $625k-1.25m on a quarterly basis. Considering Digital Ocean had a loss of $10m last quarter, that could make up over 10% of the shortfall from being profitable at the upper end of that range. A not insignificant chunk.
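The napkin math above can be written out as a short sketch. The revenue and loss figures are the rough numbers quoted in the text; the 5-10% uplift is a guess, and the calculation assumes the price increase flows straight through to revenue with no customers leaving. The function and constant names are illustrative only.

```python
CLOUDWAYS_REVENUE_2022 = 50_000_000  # rough expected 2022 revenue, from the text
DO_QUARTERLY_LOSS = 10_000_000       # Digital Ocean's loss last quarter, from the text
UPLIFT_SCENARIOS = (0.05, 0.10)      # ASSUMED revenue uplift from the price increase


def quarterly_uplift(annual_revenue: float, uplift: float) -> float:
    """Extra revenue per quarter, assuming no churn and an even spread over the year."""
    return annual_revenue * uplift / 4


for uplift in UPLIFT_SCENARIOS:
    per_quarter = quarterly_uplift(CLOUDWAYS_REVENUE_2022, uplift)
    share_of_loss = per_quarter / DO_QUARTERLY_LOSS
    print(
        f"{uplift:.0%} uplift: ${per_quarter * 4:,.0f}/year, "
        f"${per_quarter:,.0f}/quarter, "
        f"{share_of_loss:.2%} of last quarter's loss"
    )
```

Only the 10% scenario clears the "over 10% of the shortfall" mark; at 5% the extra revenue covers a little over 6% of the quarterly loss.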

I don't know what the math looks like behind the scenes. I am just doing napkin math and using a bit of intuition. I am sure someone calculated and estimated the impact of the price increases and how they were applied. I will be interested to see the earnings over the next few quarters to measure the impact. I also wonder whether Cloudways revenue will be broken out separately. Hosting is often quite an inelastic product because it's a pain to move and change. If someone calculated everything correctly, this could be great at helping Digital Ocean return to profitability in the short to medium term. I look forward to seeing how Digital Ocean performs in the future.
