Sponsored by LoadStorm, the easy and cost-effective load testing tool for web and mobile applications.

This is the third round of managed WordPress web hosting performance testing. You can see the original here, and the November 2014 version here.

New (9/14/2016) The 2016 WordPress Hosting Performance Benchmarks are live.

New (8/20/2015) This post is also available as an Infographic.

Companies Tested

A Small Orange [Reviews]

BlueHost [Reviews]

CloudWays [Reviews]

DreamHost [Reviews]

FlyWheel [Reviews]

GoDaddy [Reviews]

Kinsta

LightningBase

MediaTemple [Reviews]

Nexcess

Pagely [Reviews]

Pantheon [Reviews]

Pressidium

PressLabs

SiteGround† [Reviews]

WebHostingBuzz

WPEngine* [Reviews]

WPOven.com

WPPronto

Note: Pressable and WebSynthesis [Reviews] were not interested in being tested this round and were excluded. WordPress.com dropped out due to technical difficulties in testing their platform (a large multi-site install).

Every company donated an account to test on. All were the WordPress-specific plans (e.g. GoDaddy's WordPress option). I checked to make sure I was on what appeared to be a normal server. The exception is WPEngine*. They wrote that I was "moved over to isolated hardware (so your tests don’t cause any issues for other customers) that is in-line with what other $29/month folks use." From my understanding, all of their testing was done on a shared plan environment with no actual users on the server to share it with. That is almost certainly the best-case scenario performance-wise, so I suspect the results look better than what most users would actually get.

†Tests were performed with SiteGround's proprietary SuperCacher module turned on fully with memcached.

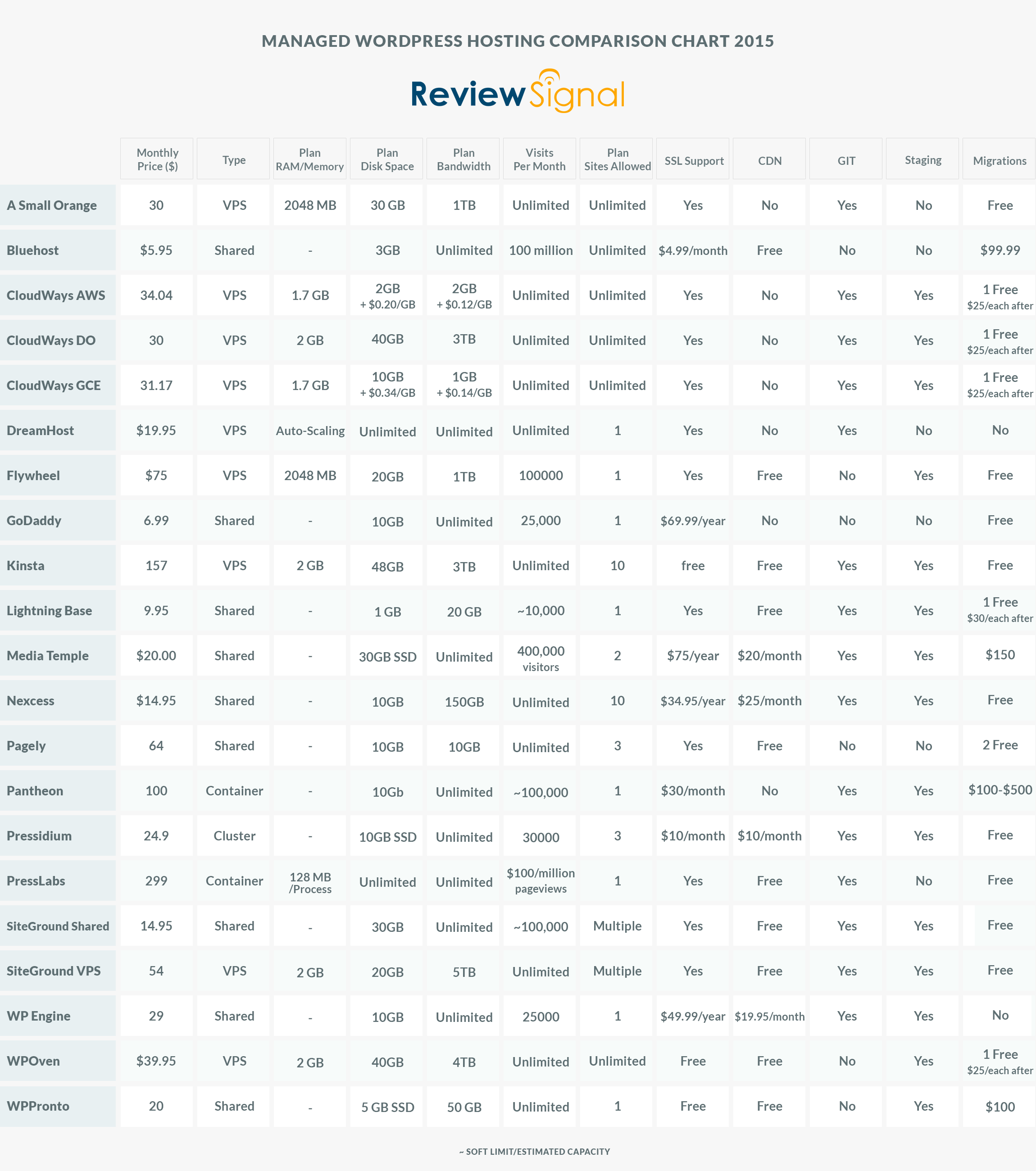

The Products (Click for Full-Size Image)

Methodology

The question I tried to answer is how well do these WordPress hosting services perform? I tested each company on two distinct measures of performance: peak performance and consistency. I've also included a new and experimental compute and database benchmark. Since it is brand new, it has no bearing on the results but is included for posterity and in the hope that it will lead to another meaningful benchmark in the future.

All tests were performed on an identical WordPress dummy website with the same plugins except in cases where hosts added extra plugins. Each site was monitored for over a month for consistency.

1. LoadStorm

LoadStorm was kind enough to give me unlimited resources to perform load testing on their platform, and multiple staff members were involved in designing and testing these WordPress hosts. I created identical scripts for each host to load a site, log in to the site and browse the site. I tested every company up to 2000 concurrent users. Logged-in users were designed to break some of the caching and better simulate real user load.

2. Blitz.io

I used Blitz again to compare against previous results. Since the 1000 user test wasn't meaningful anymore, I did a single test for 60 seconds, scaling from 1-2000 users.
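To make the "scaling from 1-2000 users" idea concrete, here is a minimal sketch of a ramp schedule. It assumes the ramp is linear over the test window, which is my assumption and not something Blitz's documentation is quoted on here; the function name `concurrent_users` is purely illustrative.

```python
def concurrent_users(t_seconds: float, start: int, end: int, duration_seconds: float) -> int:
    """Users active at time t, ASSUMING a linear ramp from start to end."""
    if not 0 <= t_seconds <= duration_seconds:
        raise ValueError("t_seconds must fall inside the test window")
    fraction = t_seconds / duration_seconds
    return round(start + (end - start) * fraction)


# The Blitz run described above: 1 to 2000 users over 60 seconds.
for t in (0, 15, 30, 45, 60):
    print(t, concurrent_users(t, start=1, end=2000, duration_seconds=60))
```

The same helper would describe the LoadStorm ramps (for example 100 to 1000 users over 1800 seconds) if those are also linear.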

3. Uptime (UptimeRobot and StatusCake)

Consistency matters. I wanted to see how well these companies performed over a longer period of time. I used two separate uptime monitoring services over the course of a month to test consistency.

4. WebPageTest.org

"WebPagetest is an open source project that is primarily being developed and supported by Google as part of our efforts to make the web faster." WebPageTest grades performance and allows you to run tests from multiple locations simulating real users. I tested from Dulles, VA; Miami, FL; Denver, CO; and Los Angeles, CA.

5. WPPerformanceTester

I created a WordPress plugin to benchmark CPU, MySql and WordPress DB performance. It is based on a PHP benchmark script I forked (available on GitHub) and adapted to WordPress. The CPU/MySql benchmarks test the compute power. The WordPress component tests actually calling $wpdb and executing insert, select, update and delete queries. This plugin will be open sourced once I clean it up and make it usable for someone beyond myself.

Background Information

Before I go over the results I wanted to explain and discuss a few things. Every provider I tested had the latest version of WordPress installed. I had to ask a lot of companies to disable some security features to perform accurate load tests. Those companies were: DreamHost, Kinsta, LightningBase, Nexcess, Pagely, Pressidium, PressLabs, SiteGround, and WPEngine.

Every company that uses a VPS-based platform was standardized around 2GB of memory for its plan (or equivalent) in an effort to make those results more comparable. The exception is DreamHost, which uses a VPS platform but with multiple scaling VPSs.

CloudWays's platform lets you deploy your WordPress stack to multiple providers: Digital Ocean, Amazon (AWS)'s EC2 servers or Google Compute Engine. I was given a server on each platform with near comparable specs (EC2 Small 1.7GB vs Digital Ocean 2GB vs GCE 1.7GB g1 Small). So CloudWays is listed as CloudWays AWS, CloudWays DO and CloudWays GCE to indicate which provider the stack was running on.

SiteGround contributed both a shared account and a VPS account, listed as SiteGround Shared and SiteGround VPS.

Results

LoadStorm

Since last round didn't have any real issues until 1000 users, I skipped all the little tests and began with 100-1000 users. I also did the 500-2000 user test on every company instead of simply disqualifying companies. I ran these tests with an immense amount of help from Phillip Odom at LoadStorm. He spent hours with me, teaching me how to use LoadStorm more effectively, building tests and offering guidance/feedback on the tests themselves.

Test 1. 100-1000 Concurrent Users over 30 minutes

| Company | Total Requests | Peak RPS | Average RPS | Peak Response Time (ms) | Average Response Time (ms) | Total Data Transferred (GB) | Peak Throughput (kB/s) | Average Throughput (kB/s) | Total Errors |

| A Small Orange | 114997 | 90.27 | 61.83 | 1785 | 259 | 2.41 | 1878.14 | 1295.82 | 0 |

| BlueHost | 117569 | 93.62 | 63.21 | 15271 | 2522 | 5.41 | 4680.6 | 2909.16 | 23350 |

| CloudWays AWS | 138176 | 109.1 | 74.29 | 15086 | 397 | 7.15 | 6016.88 | 3844.49 | 44 |

| CloudWays DO | 139355 | 109.88 | 74.92 | 2666 | 321 | 7.21 | 5863.82 | 3876.3 | 0 |

| CloudWays GCE | 95114 | 76.22 | 52.84 | 15220 | 7138 | 3.63 | 3247.38 | 2014.92 | 23629 |

| DreamHost | 143259 | 113.57 | 77.02 | 15098 | 314 | 7.1 | 6136.75 | 3815.73 | 60 |

| FlyWheel | 128672 | 101.98 | 69.18 | 9782 | 571 | 7 | 6197.92 | 3764.6 | 333 |

| GoDaddy | 134827 | 104.6 | 72.49 | 15084 | 352 | 7.49 | 6368.32 | 4028.45 | 511 |

| Kinsta | 132011 | 102.98 | 70.97 | 3359 | 229 | 7.35 | 6078.95 | 3951.75 | 0 |

| LightningBase | 123522 | 100.73 | 68.62 | 4959 | 308 | 6.53 | 5883.15 | 3626.2 | 4 |

| MediaTemple | 134278 | 105.72 | 74.6 | 15096 | 363 | 7.45 | 6397.68 | 4140.7 | 640 |

| Nexcess | 131422 | 104.47 | 70.66 | 7430 | 307 | 7.17 | 6256.08 | 3854.27 | 0 |

| Pagely | 87669 | 70.8 | 47.13 | 7386 | 334 | 5.75 | 5090.11 | 3091.06 | 3 |

| Pantheon | 135560 | 106.42 | 72.88 | 7811 | 297 | 7.24 | 5908.27 | 3890.83 | 0 |

| Pressidium | 131234 | 103.03 | 70.56 | 7533 | 352 | 7.23 | 6092.36 | 3889.64 | 0 |

| PressLabs | 132931 | 107.43 | 71.47 | 10326 | 306 | 3.66 | 3264.02 | 1968.98 | 0 |

| SiteGround Shared | 137659 | 111.35 | 74.01 | 7480 | 843 | 6.85 | 5565.02 | 3683.04 | 111 |

| SiteGround VPS | 130993 | 103.45 | 70.43 | 15074 | 310 | 7.17 | 6061.82 | 3855.86 | 19 |

| WebHostingBuzz | | | | | | | | | |

| WPEngine | 148744 | 117.15 | 79.97 | 15085 | 206 | 7.32 | 6224.06 | 3935.35 | 4 |

| WPOven.com | 112285 | 96.58 | 60.37 | 15199 | 2153 | 5.78 | 5680.23 | 3108.94 | 5594 |

| WPPronto | 120148 | 99.08 | 64.6 | 15098 | 681 | 5.61 | 4698.51 | 3018.33 | 19295 |

Discussion of LoadStorm Test 1 Results

Most companies were ok with this test, but a few didn't do well: BlueHost, CloudWays GCE, WPOven and WPPronto. FlyWheel, GoDaddy and Media Temple had a couple spikes but nothing too concerning. I was actually able to work with someone at DreamHost this time and bypass their security features and their results look better than last time. I am also excited that we got PressLabs working this time around after the difficulties last round.

In general, the 1000 user test isn't terribly exciting: 7/21 companies got perfect scores with no errors. Another 6 didn't have more than 100 errors. Again, this test pointed out some weak candidates but really didn't do much to separate the upper end of the field.

Test 2. 500 - 2000 Concurrent Users over 30 Minutes

Note: Click the company name to see full test results.

| Company | Total Requests | Peak RPS | Average RPS | Peak Response Time (ms) | Average Response Time (ms) | Total Data Transferred (GB) | Peak Throughput (kB/s) | Average Throughput (kB/s) | Total Errors |

| A Small Orange | 242965 | 181.62 | 130.63 | 15078 | 411 | 5.09 | 3844.54 | 2737 | 1 |

| BlueHost | 201556 | 166.83 | 111.98 | 15438 | 8186 | 5.32 | 5229.07 | 2953.17 | 93781 |

| CloudWays AWS | 261050 | 195.23 | 145.03 | 15245 | 2076 | 13.13 | 9685.95 | 7296.4 | 11346 |

| CloudWays DO | 290470 | 218.17 | 161.37 | 15105 | 532 | 14.87 | 12003.3 | 8262.77 | 1189 |

| CloudWays GCE | 193024 | 147.22 | 107.24 | 15168 | 8291 | 4.72 | 4583.86 | 2622.85 | 93821 |

| DreamHost | 303536 | 232.27 | 163.19 | 15100 | 442 | 14.95 | 12619.67 | 8039.54 | 210 |

| FlyWheel | 253801 | 202.15 | 136.45 | 15218 | 1530 | 11.26 | 9939.17 | 6052.49 | 56387 |

| GoDaddy | 283904 | 221.12 | 152.64 | 15025 | 356 | 15.74 | 13731.97 | 8460.12 | 1432 |

| Kinsta | 276547 | 214.93 | 148.68 | 15025 | 573 | 15.16 | 13444.75 | 8151.37 | 1811 |

| LightningBase | 263967 | 211.12 | 141.92 | 7250 | 330 | 13.82 | 13061.01 | 7429.91 | 18 |

| MediaTemple | 286087 | 223.93 | 153.81 | 15093 | 355 | 15.83 | 14532.42 | 8512.11 | 1641 |

| Nexcess | 277111 | 207.73 | 148.98 | 15087 | 548 | 15 | 12313.29 | 8066.37 | 359 |

| Pagely | 181740 | 148.18 | 97.71 | 11824 | 791 | 11.82 | 10592.21 | 6355.09 | 1 |

| Pantheon | 287909 | 223.02 | 154.79 | 15039 | 276 | 15.28 | 13831.45 | 8217.49 | 3 |

| Pressidium | 278226 | 208.55 | 149.58 | 15044 | 439 | 15.28 | 12453.66 | 8213.63 | 12 |

| PressLabs | 280495 | 214.07 | 150.8 | 8042 | 328 | 7.66 | 6267.46 | 4118.34 | 0 |

| SiteGround Shared | 301291 | 231.93 | 161.98 | 15052 | 557 | 14.76 | 12799.09 | 7934.03 | 1837 |

| SiteGround VPS | 279109 | 209.67 | 150.06 | 12777 | 374 | 15.21 | 12506.79 | 8178.5 | 20 |

| WebHostingBuzz | | | | | | | | | |

| WPEngine | 316924 | 241.67 | 170.39 | 7235 | 285 | 15.52 | 12989.23 | 8341.47 | 3 |

| WPOven.com | 213809 | 169.97 | 118.78 | 15268 | 4442 | 8.81 | 7153.5 | 4894.98 | 35292 |

| WPPronto | 258092 | 206.53 | 143.38 | 15246 | 539 | 10.85 | 9483.74 | 6026.26 | 76276 |

Discussion of LoadStorm Test 2 Results

The companies that struggled previously (BlueHost, CloudWays GCE, WPOven and WPPronto) didn't improve, which is to be expected. FlyWheel, which had a few spikes in the first test, ran into more serious difficulties and wasn't able to withstand the load. CloudWays AWS ended up failing, while their Digital Ocean machine spiked but was able to handle the load.

The signs of load were much more apparent this round, with a lot more spikes from many more companies. GoDaddy and Media Temple, which also had spikes in the first test, had spikes again but seemed to be able to withstand the load. Kinsta spiked early but was stable for the duration of the test. SiteGround Shared had a steady set of small spikes but didn't fail.

Nobody had the same level of perfection as last time, with no spike in response times. Only one company (PressLabs) managed an error-free run this time, but several came close: A Small Orange went from 0 errors to 1, Pantheon went from 0 to 3 and Pagely had only 1 error, again.

The biggest change that occurred was WPEngine. It went from failing on the 1000 user test to having one of the better runs in the 2000 user test. I have to emphasize it was a shared plan on isolated hardware though with no competition for resources.

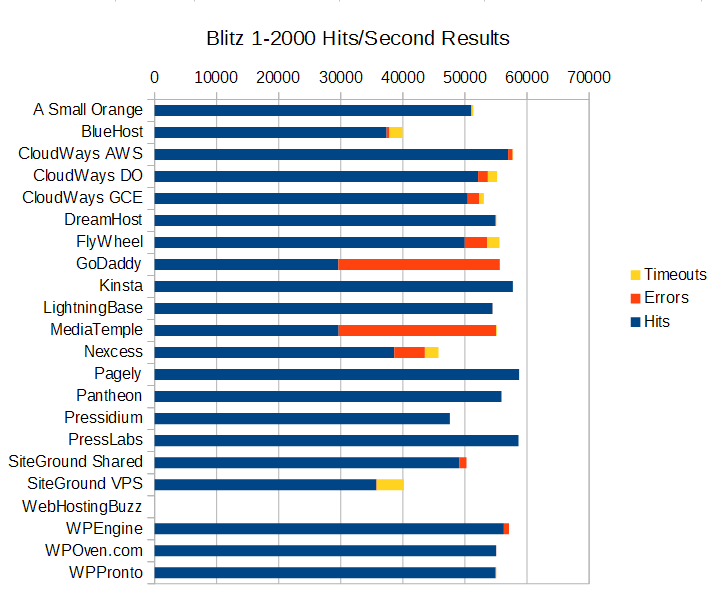

Blitz.io

Test 1. 1-2000 Concurrent Users over 60 seconds

Blitz Test 1. Quick Results Table

Note: Click the company name to see full test results.

| Company | Hits | Errors | Timeouts | Average Hits/Second | Average Response Time (ms) | Fastest Response (ms) | Slowest Response (ms) |

| A Small Orange | 51023 | 56 | 280 | 850 | 115 | 72 | 285 |

| BlueHost | 37373 | 475 | 2102 | 623 | 338 | 124 | 979 |

| CloudWays AWS | 56946 | 737 | 74 | 949 | 13 | 3 | 73 |

| CloudWays DO | 52124 | 1565 | 1499 | 869 | 35 | 23 | 87 |

| CloudWays GCE | 50463 | 1797 | 782 | 841 | 96 | 92 | 138 |

| DreamHost | 58584 | 1 | 0 | 978 | 4 | 4 | 4 |

| FlyWheel | 49960 | 3596 | 2022 | 833 | 30 | 24 | 140 |

| GoDaddy | 29611 | 26024 | 18 | 494 | 165 | 103 | 622 |

| Kinsta | 57723 | 1 | 0 | 962 | 20 | 20 | 21 |

| LightningBase | 54448 | 1 | 4 | 907 | 81 | 81 | 81 |

| MediaTemple | 29649 | 25356 | 126 | 494 | 162 | 104 | 1103 |

| Nexcess | 38616 | 4924 | 2200 | 644 | 221 | 70 | 414 |

| Pagely | 58722 | 1 | 0 | 979 | 3 | 2 | 5 |

| Pantheon | 55814 | 112 | 9 | 930 | 52 | 52 | 54 |

| Pressidium | 47567 | 1 | 9 | 793 | 233 | 233 | 234 |

| PressLabs | 58626 | 0 | 0 | 977 | 5 | 4 | 6 |

| SiteGround Shared | 49127 | 1123 | 1 | 819 | 172 | 171 | 178 |

| SiteGround VPS | 35721 | 75 | 4371 | 595 | 238 | 82 | 491 |

| WebHostingBuzz | | | | | | | |

| WPEngine | 56277 | 827 | 1 | 938 | 27 | 21 | 70 |

| WPOven.com | 55027 | 10 | 2 | 917 | 69 | 68 | 71 |

| WPPronto | 54921 | 99 | 29 | 915 | 69 | 68 | 72 |

Discussion of Blitz Test 1 Results

This test simply checks whether the company is caching the front page and how well whatever caching system they have set up is performing (generally this hits something like Varnish or Nginx).

Who performed without any major issues?

DreamHost, Kinsta, LightningBase, Pagely, Pantheon, Pressidium, PressLabs, WPOven and WPPronto all performed near perfectly. There's nothing more to say about these companies other than that they did an excellent job.

Who had some minor issues?

A Small Orange started showing signs of load towards the end. CloudWays AWS had a spike and started to show signs of load towards the end. SiteGround Shared had a spike at the end that ruined a very beautiful looking run otherwise. WPEngine started to show signs of load towards the end of the test.

Who had some major issues?

BlueHost, CloudWays DO, CloudWays GCE, FlyWheel, GoDaddy, MediaTemple, Nexcess, and SiteGround VPS had some major issues. The CloudWays platform pushed a ton of requests (the only companies over 50,000) but also had a lot of errors and timeouts. The rest were below 50,000 (although FlyWheel was only a hair behind) and also had a lot of errors and timeouts. SiteGround VPS might be an example of how shared resources can get better performance versus dedicated resources. GoDaddy and Media Temple have near identical performance (again, it's the same technology I believe). Both look perfect until near the end where they crash and start erroring out. Nexcess just shows load taking its toll.

Uptime Monitoring

Both uptime monitoring solutions were third party providers that offer free services. All the companies were monitored over an entire month+ (May-June 2015).

| Company | Uptime (30 Day, %) |

| A Small Orange | 100 |

| BlueHost | 100 |

| CloudWays AWS | 100 |

| CloudWays DO | 100 |

| CloudWays GCE | 100 |

| DreamHost | 94.06 |

| FlyWheel | 100 |

| GoDaddy | 100 |

| Kinsta | 100 |

| LightningBase | 100 |

| MediaTemple | 100 |

| Nexcess | 100 |

| Pagely | 100 |

| Pantheon | 99.94 |

| Pressidium | 100 |

| PressLabs | 100 |

| SiteGround Shared | 100 |

| SiteGround VPS | 100 |

| WebHostingBuzz | 42.9 |

| WPEngine | 100 |

| WPOven.com | 100 |

| WPPronto | 100 |

At this point, I will finally address the odd elephant in the blog post. WebHostingBuzz has empty lines for all the previous tests. Why? Because their service went down and never came back online. I was told that I put an incorrect IP address for the DNS. However, that IP worked when I started and was the IP address I was originally given (hence the 42% uptime, it was online when I started testing). It took weeks to even get a response and once I corrected the IP, all it ever got was a configuration error page from the server. I've not received a response yet about this issue and have written them off as untestable.

The only other company that had any major issue was DreamHost. I'm not sure what happened, but they experienced some severe downtime while I was testing the system and returned an internal server error for 42 hours.
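As a quick sanity check, an uptime percentage can be converted back into hours of downtime. This is a minimal sketch that assumes the monitoring window is exactly 30 days, as the table header suggests; the helper name `downtime_hours` is mine.

```python
def downtime_hours(uptime_percent: float, window_days: float = 30.0) -> float:
    """Hours of downtime implied by an uptime percentage over the window.

    ASSUMPTION: the window is exactly `window_days` long (30 days here).
    """
    window_hours = window_days * 24
    return window_hours * (100.0 - uptime_percent) / 100.0


# Figures taken from the UptimeRobot table above.
for company, uptime in {"DreamHost": 94.06, "Pantheon": 99.94}.items():
    print(f"{company}: about {downtime_hours(uptime):.1f} hours down in 30 days")
```

Under that assumption, DreamHost's 94.06% works out to just under 43 hours, which lines up with the roughly 42 hours of internal server errors mentioned above.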

Every other company had 99.9% uptime or better.

StatusCake had a slightly longer window available from their reporting interface, so the percentages differ a little, most noticeably for companies like DreamHost.

| StatusCake | Availability (%) | Response Time (s) |

| A Small Orange | 99.96 | 0.21 |

| BlueHost | 99.99 | 0.93 |

| CloudWays AWS | 100 | 0.76 |

| CloudWays DO | 100 | 0.47 |

| CloudWays GCE | 100 | 0.69 |

| DreamHost | 97.14 | 1.11 |

| FlyWheel | 100 | 1.25 |

| GoDaddy | 100 | 0.65 |

| Kinsta | 100 | 0.71 |

| LightningBase | 99.99 | 0.61 |

| MediaTemple | 100 | 1.38 |

| Nexcess | 100 | 0.61 |

| Pagely | 99.99 | 0.47 |

| Pantheon | 99.98 | 0.56 |

| Pressidium | 99.99 | 0.94 |

| PressLabs | 100 | 0.65 |

| SiteGround Shared | 100 | 0.54 |

| SiteGround VPS | 100 | 0.9 |

| WebHostingBuzz | 58.1 | 0.67 |

| WPEngine | 100 | 0.71 |

| WPOven.com | 100 | 0.73 |

| WPPronto | 100 | 1.19 |

The results mirror UptimeRobot pretty closely. WebHostingBuzz and DreamHost had issues. Everyone else is 99.9% or better.

StatusCake uses a real browser to track response time as well. Compared to last year, everything looks faster. Only two companies were sub one second average response time last year. This year, almost every company maintained sub one second response time, even the company that had servers in Europe (Pressidium).

WebPageTest.org

Every test was run with the settings: Chrome Browser, 9 Runs, native connection (no traffic shaping), first view only.

| Company | Dulles, VA | Miami, FL | Denver, CO | Los Angeles, CA | Average |

| A Small Orange | 0.624 | 0.709 | 0.391 | 0.8 | 0.631 |

| BlueHost | 0.909 | 1.092 | 0.527 | 0.748 | 0.819 |

| CloudWays AWS | 0.627 | 0.748 | 0.694 | 1.031 | 0.775 |

| CloudWays DO | 0.605 | 0.751 | 0.635 | 1.075 | 0.7665 |

| CloudWays GCE | 0.787 | 0.858 | 0.588 | 1.019 | 0.813 |

| DreamHost | 0.415 | 0.648 | 0.522 | 0.919 | 0.626 |

| FlyWheel | 0.509 | 0.547 | 0.594 | 0.856 | 0.6265 |

| GoDaddy | 0.816 | 1.247 | 0.917 | 0.672 | 0.913 |

| Kinsta | 0.574 | 0.559 | 0.587 | 0.903 | 0.65575 |

| LightningBase | 0.544 | 0.656 | 0.5 | 0.616 | 0.579 |

| MediaTemple | 0.822 | 0.975 | 0.983 | 0.584 | 0.841 |

| Nexcess | 0.712 | 0.871 | 0.593 | 0.795 | 0.74275 |

| Pagely | 0.547 | 0.553 | 0.665 | 0.601 | 0.5915 |

| Pantheon | 0.627 | 0.567 | 0.474 | 0.67 | 0.5845 |

| Pressidium | 0.777 | 0.945 | 0.898 | 1.05 | 0.9175 |

| PressLabs | 0.542 | 1.257 | 0.723 | 0.732 | 0.8135 |

| SiteGround Shared | 0.721 | 0.85 | 0.478 | 0.808 | 0.71425 |

| SiteGround VPS | 0.667 | 0.651 | 0.515 | 0.657 | 0.6225 |

| WebHostingBuzz | | | | | |

| WPEngine | 0.648 | 0.554 | 0.588 | 0.816 | 0.6515 |

| WPOven.com | 0.624 | 0.574 | 0.556 | 0.595 | 0.58725 |

| WPPronto | 0.698 | 0.809 | 0.443 | 0.721 | 0.66775 |

In line with the StatusCake results, the WebPageTest results were shockingly fast. The first time I did this testing, only one company had a sub one second average response time. Last year about half the companies were over one second average response time. The fastest last year was LightningBase at 0.7455 seconds. This year that would be in the slower half of the results. The fastest this year was LightningBase again at 0.579 seconds. The good news for consumers appears to be that everyone is getting faster and your content will get to consumers faster than ever no matter who you choose.

WPPerformanceTester

| Company | PHP Ver | MySql Ver | PHP Bench | WP Bench | MySql Host |

| A Small Orange | 5.5.24 | 5.5.42-MariaDB | 13.441 | 406.67 | LOCALHOST |

| BlueHost | 5.4.28 | 5.5.42 | 12.217 | 738.01 | LOCALHOST |

| CloudWays AWS | 5.5.26 | 5.5.43 | 10.808 | 220.12 | LOCALHOST |

| CloudWays DO | 5.5.26 | 5.5.43 | 11.888 | 146.76 | LOCALHOST |

| CloudWays GCE | 5.5.26 | 5.5.43 | 10.617 | 192.2 | LOCALHOST |

| DreamHost | 5.5.26 | 5.1.39 | 27.144 | 298.6 | REMOTE |

| FlyWheel | 5.5.26 | 5.5.43 | 12.082 | 105.76 | LOCALHOST |

| GoDaddy | 5.4.16 | 5.5.40 | 11.846 | 365.76 | REMOTE |

| Kinsta | 5.6.7 | 10.0.17-MariaDB | 11.198 | 619.58 | LOCALHOST |

| LightningBase | 5.5.24 | 5.5.42 | 12.369 | 768.64 | LOCALHOST |

| MediaTemple | 5.4.16 | 5.5.37 | 12.578 | 333.33 | REMOTE |

| Nexcess | 5.3.24 | 5.6.23 | 12.276 | 421.76 | LOCALHOST |

| Pagely | 5.5.22 | 5.6.19 | 10.791 | 79.79 | REMOTE |

| Pantheon | 5.5.24 | 5.5.337-MariaDB | 12.669 | 194.86 | REMOTE |

| Pressidium | 5.5.23 | 5.6.22 | 11.551 | 327.76 | LOCALHOST |

| PressLabs | 5.6.1 | 5.5.43 | 8.918 | 527.7 | REMOTE |

| SiteGround Shared | 5.5.25 | 5.5.40 | 14.171 | 788.02 | LOCALHOST |

| SiteGround VPS | 5.6.99 | 5.5.31 | 11.156 | 350.51 | LOCALHOST |

| WebHostingBuzz | | | | | |

| WPEngine | 5.5.9 | 5.6.24 | 10.97 | 597.37 | LOCALHOST |

| WPOven.com | 5.3.1 | 5.5.43 | 11.6 | 570.13 | LOCALHOST |

| WPPronto | 5.5.25 | 5.5.42 | 11.485 | 889.68 | LOCALHOST |

This test is of my own creation. I created a plugin designed to test a few aspects of performance and gather information about the system it was running on. The results here have no bearing on how I am evaluating these companies because I don't have enough details to make them meaningful. My goal is to publish the plugin and get people to submit their own benchmarks, though. This would give me a better picture of the real performance people are experiencing from companies and let me track changes over time.

The server details it extracted may be of some interest to many people. Most companies were running PHP 5.5 or later, but a few weren't. Most companies seem to be running normal MySql, but ASO, Kinsta and Pantheon are all running MariaDB, which many people think has better performance. Considering where all three of those companies ended up in these tests, it's not hard to believe. There seems to be an even split between running MySql on localhost (BlueHost, LightningBase, Nexcess, SiteGround, WPEngine, WPPronto) and having a remote MySql server (DreamHost, GoDaddy, MediaTemple, Pagely, Pantheon, PressLabs).

The PHP Bench was fascinating because most companies were pretty close, with the exception of DreamHost, which took more than twice as long as most to execute.

The WP Bench was all over the place. The test simulates 1000 $wpdb calls doing the primary MySql functions (insert, select, update, delete). Pagely had by far the slowest result, yet on every load test and speed test they went through, they performed with near perfect scores. Other companies, like WPPronto or BlueHost, had outrageously fast scores but didn't perform anywhere near as well as Pagely on more established tests.
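For readers wondering what this kind of benchmark looks like, here is a rough sketch of the idea. It is not the plugin's code: it uses Python's built-in sqlite3 with an in-memory database as a stand-in for MySql and $wpdb, it assumes the 1000 calls are split evenly across the four query types, and it assumes the score is reported as queries per second (higher is faster). All names in it are hypothetical.

```python
import sqlite3
import time


def run_crud_benchmark(iterations: int = 250) -> float:
    """Time a loop of insert/select/update/delete and return queries per second.

    ASSUMPTIONS: sqlite3 in memory stands in for MySql/$wpdb, and
    250 iterations x 4 query types approximates "1000 calls".
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE bench (id INTEGER PRIMARY KEY, payload TEXT)")
    queries = 0
    start = time.perf_counter()
    for i in range(iterations):
        conn.execute("INSERT INTO bench (id, payload) VALUES (?, ?)", (i, "x" * 32))
        conn.execute("SELECT payload FROM bench WHERE id = ?", (i,)).fetchone()
        conn.execute("UPDATE bench SET payload = ? WHERE id = ?", ("y" * 32, i))
        conn.execute("DELETE FROM bench WHERE id = ?", (i,))
        queries += 4
    elapsed = time.perf_counter() - start
    conn.close()
    # Guard against a zero reading on very coarse clocks.
    return queries / max(elapsed, 1e-9)


if __name__ == "__main__":
    print(f"{run_crud_benchmark():.2f} queries/second")
```

A loop like this mostly measures per-query round-trip latency to the database, which may be part of why a remote MySql server can score poorly here while the host still does well under cached load tests.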

For those reasons, I don't think this benchmark is usable yet. But I would love feedback and thoughts on it from the community and the hosting companies themselves.

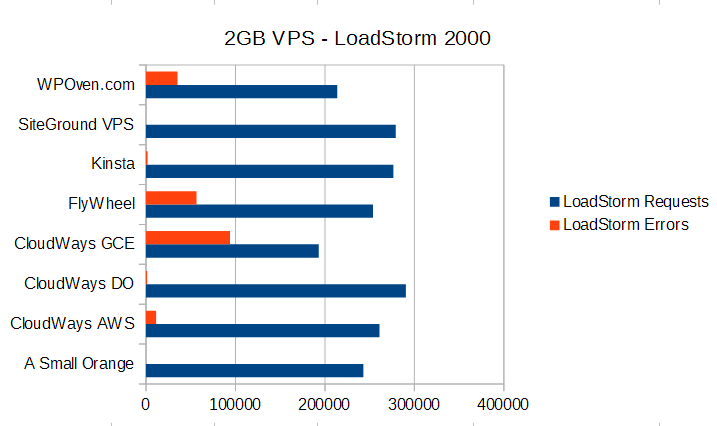

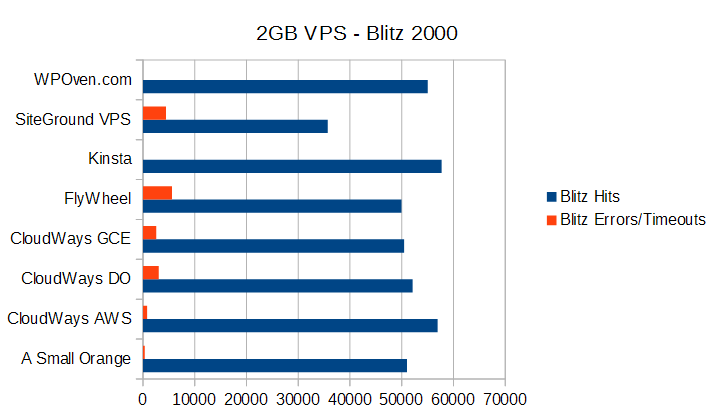

Attempting VPS Parity in Testing

One substantial change to the testing methodology this round was that all VPS providers were tested with the same amount of memory (2 GB RAM). Since the most interesting tests were the load tests, I have graphed only those.

The LoadStorm test had a huge spread in terms of performance. The Google Compute Engine server from CloudWays was by far the worst (because, as discussed elsewhere in this post, it's not a true VPS with dedicated resources). FlyWheel and WPOven also struggled to keep up with the LoadStorm test. Others like ASO, CloudWays DO, Kinsta, and SiteGround handled the test with minimal issues. On the other hand, it's very interesting to see how consistently most of the VPSs performed in the Blitz test, landing between 50,000 and roughly 55,000 hits. The error rates are a bit interesting, though, because this hardware should be about as close to the same as possible.

The easier result to explain is the Blitz performance. It is testing the ability of these companies to spit back a single page from cache (most likely Varnish or Nginx). So that level of caching seems to be pretty close to parity.

The LoadStorm test shows a wide difference in performance. The LoadStorm test is far more comprehensive and designed to bust through some caching and hit other parts of the stack. It really elucidates the difference in companies' ability to tune and optimize their servers from both software and hardware perspectives.

Conclusion

Every service seems to have its issues somewhere if you look hard enough. I try to avoid injecting my personal opinion and bias as much as possible. As I've added more companies to the testing, the line between which companies performed in the top tier and which did not has become blurrier.

The closest test was the LoadStorm 2000 test, where multiple companies (CloudWays DO, GoDaddy, Kinsta, Media Temple, SiteGround Shared) were on the absolute edge of being top tier providers. Last time I picked an arbitrary 0.5% error rate, and these companies were all at roughly the 0.4-0.7% mark. Last year the difference was quite large after that point. I openly admit to having personal connections with people at nearly all these companies, and my ability to draw the line in this instance could be considered questionable.

So this year I deferred the judgment to an independent party, Phillip Odom at LoadStorm, to determine what he thought of the performances. Phillip is the Director of Performance Engineering at LoadStorm and he has more experience with load testing and the LoadStorm product than almost anyone I know. His job was to determine if the performance could be considered top tier or not. He said a couple of spikes early but a stable performance otherwise seemed top tier. The difference in 1/100 of a percent didn't seem like a big deal, especially over a 30 minute test where the issues were at the start as it ramped up to 2000 concurrent users. So the companies on the edge that exhibited that behavior were considered top tier for the LoadStorm test.
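For anyone who wants to check the borderline cases themselves, the error rate is simply total errors divided by total requests. The sketch below uses the figures from the LoadStorm Test 2 table; the 0.5% threshold is the arbitrary cutoff mentioned above, and the variable and function names are only illustrative.

```python
# (total requests, total errors) from the LoadStorm 500-2000 user test table.
LOAD_TEST_2 = {
    "CloudWays DO": (290470, 1189),
    "GoDaddy": (283904, 1432),
    "Kinsta": (276547, 1811),
    "MediaTemple": (286087, 1641),
    "SiteGround Shared": (301291, 1837),
}

THRESHOLD_PERCENT = 0.5  # the arbitrary cutoff used in the previous round


def error_rate_percent(total_requests: int, total_errors: int) -> float:
    """Errors as a percentage of all requests made during the test."""
    return 100.0 * total_errors / total_requests


for company, (total, errors) in LOAD_TEST_2.items():
    rate = error_rate_percent(total, errors)
    side = "under" if rate < THRESHOLD_PERCENT else "at or over"
    print(f"{company}: {rate:.2f}% ({side} the {THRESHOLD_PERCENT}% line)")
```

All five land between about 0.4% and 0.7%, which is why a hard cutoff alone felt like the wrong tool for separating them.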

I won't be ranking or outright saying any single company is the best. Some providers did exceptionally well and tended to clump together performance-wise; I will call those the top tier providers. This top tier designation is related to performance only and is claimed only from the results of these tests. What each of these companies is offering is different and may best suit different audiences depending on a variety of factors beyond performance, such as features, price, support, and scale (I tested mostly entry level plans OR 2GB RAM plans for VPS providers). I will also provide a short summary and discussion of the results for each provider.

Top Tier WordPress Hosting Performance

A Small Orange, Kinsta, LightningBase, Pagely, Pantheon, Pressidium, PressLabs

Each of these companies performed with little to no failures in all tests and exhibited best in class performance for WordPress hosting.

Honorable Mentions

CloudWays gets an honorable mention because its Digital Ocean (DO) instance performed quite well overall. It had some issues with the Blitz test at the end but still managed to push through over 52,000 successful hits. Its Amazon stack performed better on the Blitz test but not as well on LoadStorm. I'm not sure why the performance of identical stacks differs so much across tests between AWS and DO, but they improved dramatically since the last test and are on the cusp of becoming a top tier provider.

SiteGround's Shared hosting also gets an honorable mention. It was on that edge for both LoadStorm and Blitz. It had one spike at the end of the Blitz test which caused its error rate to spike, but the response times didn't move.

WPEngine gets an honorable mention because they performed well on most tests. They struggled and showed signs of load on the Blitz test, though, which kept them out of the top tier of providers.

Individual Host Analysis

Another top tier performance from ASO. They didn't really struggle much with any of the tests. Although their performances were slightly below their results last time, it's hard to beat things like having zero errors during LoadStorm's test. It's become easier to launch the LEMP VPS stack which is also nice. All in all, the experience was in-line with what I would expect from a company that has one of the highest support ratings on our site.

BlueHost improved against their last results but remained well below par in the performance department. The pricing and performance just don't match yet.

CloudWays is always a fun company to test. They added another provider since their last test: Google Compute Engine (GCE). Their Digital Ocean and Amazon performances both went up substantially, which tells me they've made major improvements on their WordPress stack. We did run into some huge flaws in GCE, though, which aren't CloudWays's fault. We used the g1.small server on GCE and ran into huge performance walls that were repeatable and inexplicable from a software standpoint. Google was contacted and we learned that the "g1 family has 'fractional' CPU, meaning that not a full virtual CPU is assigned to a server. This also means that the CPU is shared with other VMs and 'capped' if usage exceeds a certain amount. This is exactly what happened during the load test. The VM runs out of CPU cycles and has to wait for new ones being assigned on the shared CPU to continue to server requests." Essentially, it's not a real VPS with dedicated resources, and I was told a comparable would be N1.standard1, which is 2-3x the price of the comparable AWS/DO servers. It doesn't make GCE a very attractive platform to host on if you're looking for performance and cost efficiency. CloudWays did show major improvements this round and earned themselves that honorable mention. They were by far the most improved provider between tests.

DreamPress improved their performance a lot over last round. In fact they did fantastically well on every load test once I got the opportunity to actually work with their engineers to bypass the security measures. However, they failed pretty badly on the uptime metrics. I have no idea what happened but I experienced a huge amount of downtime and ran into some very strange errors. If it wasn't for the severe downtime issues, DreamPress could have been in the top tier.

FlyWheel were excellent on every test except the final seconds of the Blitz test. Although they were just shy of the top tier, they are showing a lot of consistency in very good performance getting an honorable mention the last two times. Just some minor performance kinks to work out. Not bad at all for a company with the best reviews of any company Review Signal has ever tracked. FlyWheel is definitely worth a look.

GoDaddy's performance declined this round. It struggled with the Blitz test this time around. I'm not sure what changed, but it handled Blitz far worse than before and LoadStorm slightly worse. The performance between GoDaddy and Media Temple again looked near identical with the same failure points on Blitz. At the retail $6.99 price though, it's still a lot of bang for your buck compared to most providers who are in the $20-30/month range.

Kinsta had another top tier performance. There was a slight decline in performance but that could be explained by the fact we tested different products. Kinsta's test last year was a Shared plan they no longer offer. This year it was a 2GB VPS that we tested. Dedicated resources are great but sometimes shared gives you a little bit extra with good neighbors which could explain the difference. Either way, Kinsta handled all of the tests exceptionally well and earned itself top tier status.

LightningBase is another consistent performer on our list. Another test, another top tier rank earned. It had ridiculous consistency with the Blitz test where the fastest and slowest response were both 81ms. A textbook performance at incredible value of $9.95/month.

Media Temple and GoDaddy are still running the same platform by all indications. Media Temple offers a more premium set of features like Git, WP-CLI, Staging but the performance was identical. It declined from last time and had the same bottlenecks as GoDaddy.

I feel like copy and paste is the right move for Nexcess. Nexcess's performance was excellent in the LoadStorm testing. However, it collapsed during the Blitz load testing. This was the same behavior as last year. It handled the Blitz test better this year, but still not well enough. Nexcess ends up looking like a middle of the pack web host instead of a top tier one because of the Blitz test, again.

Is the extra money worth it? Only if you value perfection. Pagely came through again with an amazing set of results. It handled more hits than anyone in the Blitz test at a staggering 58,722 hits in 60 seconds (979 hits/second). We're approaching the theoretical maximum at this point of 1000 hits/second. And Pagely did it with 1 error and a 3ms performance difference from the fastest to slowest responses. The original managed WordPress company continues to put on dominant performance results.
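As a quick sanity check on the arithmetic above, here is a minimal sketch that converts a Blitz hit count into an average rate and compares it to the 1000 hits/second ceiling mentioned in the text. The function name and the constant are illustrative, not part of any Blitz tooling.

```python
# Figures taken from the Pagely Blitz result quoted above.
THEORETICAL_MAX_HITS_PER_SECOND = 1000  # ceiling stated in the article


def hits_per_second(total_hits: int, duration_seconds: float) -> float:
    """Average request rate over the whole test window."""
    return total_hits / duration_seconds


pagely_rate = hits_per_second(58_722, 60)
share_of_ceiling = pagely_rate / THEORETICAL_MAX_HITS_PER_SECOND

print(f"{pagely_rate:.1f} hits/second")        # 978.7 hits/second
print(f"{share_of_ceiling:.1%} of the ceiling")  # 97.9% of the ceiling
```

The 979 hits/second figure in the text is this average rounded to the nearest whole number.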

Another test, another top tier performance for Pantheon. Just another day being one of the most respected web hosts in the space. Everyone I talk to wants to compare their company to these guys. It's obvious why: they've built a very developer/agency friendly platform that looks nothing like anything else on the market. It also performs fantastically well. They didn't perform the absolute best on any particular test but they were right in the top echelon with minimal errors on everything.

Pressidium was a new entrant and it did exceptionally well. They are UK based and suffered slightly on some performance tests because of latency between the UK and the US testing locations used. For example, the Blitz testing showed fewer responses, but their total of 10 errors and 1 ms spread from the fastest to slowest response show pretty clearly that the limit was throughput across the Atlantic Ocean rather than their service struggling. Incredibly consistent performance. Despite their geographic disadvantage in this testing, they still managed to keep a sub-one second response from four US testing locations in the WebPageTest testing. Overall, a top tier performance from a competitor from across the pond.

We finally got PressLabs working with the LoadStorm testing software. And it was worth the wait. They were the only company to handle the 2000 logged in user test with zero errors. That, combined with the second fastest Blitz test (again without a single error), puts PressLabs firmly in the top tier, as you would expect from the most expensive offering tested this round.

It was nice that we finally worked out the security issues in testing with LoadStorm on SiteGround. SiteGround's Shared hosting platform bounced back after last year's testing. Their Blitz performance went up substantially and put it back into the honorable mention category. The VPS performance was slightly worse on the Blitz test, but noticeably better on the much longer LoadStorm test. This could be a good example of when Shared hosting can outperform dedicated resources, because Shared hosting generally has access to a lot more resources than smaller VPS plans. Depending on how they are set up and managed, you can often get more burst performance from Shared over a small VPS. But in the longer term, dedicated resources are generally more stable (and guaranteed). SiteGround's Shared hosting definitely helps keep the lower priced options with excellent performance a reality for many.

WebHostingBuzz asked to be included in this testing and then completely disintegrated to the point I couldn't even test them. I still never heard anything from them for months. I would like to know what happened, but until I actually get a response, this one will remain a bizarre mystery.

This is a difficult one to write about. There are definitely performance improvements that occurred. They jumped up to an honorable mention. Their engineers actually worked to resolve some security issues that hindered previous testing. My biggest concern is the isolated shared environment I was on. A shared environment has a lot more resources than many dedicated environments and I was isolated away to prevent the testing from affecting any customers (which is a reasonable explanation). But that means I was likely to be getting the absolute dream scenario in terms of resource allocation, so a normal user would see this in the very best case scenario. So WPEngine is certainly capable of delivering better performance than they did in the past, but I do have concerns about the reasonable expectation of a new user getting the same results.

WPOven was another new entrant to this testing and they performed well in a couple of tests. They flew through the Blitz test without any issues. Their WebPageTest results were among the absolute fastest in an already fast pack. Their uptime was perfect. They did struggle with the LoadStorm tests, though, at both the 1000 and 2000 user levels. It's nice to see more competitors enter the space. WPOven put on a good first show, but there are still some serious improvements to make to catch up to the front of the field.

Another new entrant who ran into a severe testing issue which caused me to re-do all the tests. The server was given more resources than the plan specified while debugging some security issues. The results on the extra resources were on par with some of the top in the field, but not representative of what the actual plan would be able to achieve. I didn't believe it was malicious (they were quite transparent about what happened), so I gave the benefit of the doubt and re-did all testing in a closely monitored condition. With the default resource allocation, WPPronto couldn't withstand LoadStorm's test. The results were pretty easy to see in the 508 errors it started to throw on the properly resourced plan. It ran out of processes to handle new connections as expected. As with all new entrants that don't leap to the forefront, I hope they continue to improve their service and do better next round.

Thank You

Thank you to all the companies for participating and helping make this testing a reality. Thanks to LoadStorm and specifically Phillip Odom for all his time and the tools to perform this testing. Thanks to Peter at Kinsta for offering his design support.

Updates

8/13/2015 : The wrong PDF was linked for DreamHost and its Blitz numbers were adjusted to reflect their actual performance. This change has no effect on how they were ranked since the issue was with downtime.

Kevin Ohashi

Kevin

Great comparison & discovered many new hosting companies which can deliver better results.

One question: When you talk about Godaddy, Are you testing on their managed WordPress hosting??

Also how about Hostgator Optimized WordPress hosting which they launched few weeks back?

Harsh,

It’s the managed WordPress hosting from GoDaddy.

This post took months to put together, I was pretty much done testing by the time HostGator released their offering. I’ve added them to the list for potential companies next year if they are interested.

Hey Kevin,

Nice list. Good to see ASO up on top 🙂 It’ll be better to see HostGator up there next year. Feel free to message me about it and I can get you setup.

Thanks for reaching out Kyler, I’ll put your contact info down for next year and make sure I reach out to you for inclusion in the 2016 Edition.

Thanks again Kevin for what I think is simply the best review anywhere for managed WP!

I’m not quite through reading it all, actually, but just found a tiny small detail you might want to change: GoDaddy Managed WordPress actually does offer staging (only one staging site, not two like Media Temple), and they also have GIT enabled.

Phil,

That feature spreadsheet was filled out by the companies themselves. Perhaps their offer changed from when it was filled out. I really wish I had a better way to present and maintain such a giant table of data. Problem is pretty much as soon as I publish, little details become out of date 🙁 It’s good to have comments like yours though to help inform everyone of updates and changes. So thank you!

Kevin,

maybe even some folks at GoDaddy (and Media Temple, for that matter) don’t know all about their product.

Actually, this is one of the main reasons I’m currently highly interested in Pressidium, and will probably soon host my clients there: their support is first-rate, by the highly knowledgeable founders themselves, not by some salesperson.

Also, I found that GD’s offer has become quite a bit less attractive, with higher prices and lower max numbers of visits/months, which apparently they now enforce, as there’s an “overage warranty” one can buy – a year ago, they themselves said it was essentially “unlimited”.

What I also like about Pressidium: if by some luck one’s account does go over the (admittedly rather low) max number of visits/month, they won’t shut you down or ask for more money immediately. Flexibility in Managed WordPress, now there’s a novel thing! 🙂

Thanks again for your hard work!

Also switched about 5 sites from Media Temple to SiteGround. So far pleased with the support. It’s not ideal, but much better than Media Temple in our experience. Which is a big performance metric for us that will trump many speed tests 🙂

Really great, Kevin. I found the data and your thoughts on SiteGround interesting (and comforting) since I’m switching to them from Media Temple’s unmanaged Grid for a few sites.

Sooooooo good. These articles are invaluable. Thanks man!

This is a really professional and helpful report. Transferring judgement to an independent party in the person of Phillip Odom from LoadStorm gives more credibility to these results.

Thank you very much for your hard work guys. Respect.

I love the in-depth approach of your analysis. The only drawback I see is the possibility that some of the hosts gave you a supercharged account to get better results. Kinda like what WP Engine did, but without you knowing. To be honest, having a shared hosting without having to share it with anyone invalidates the test, because in real life you will never see that happen, hence you’ll never be able to see those results in practice.

Ideally you could either compare the test account with a real one, or conduct the test without the hosts being aware.

Looking forward to the next round!

Nemanja,

You’re absolutely correct. And I do my best to prevent that. There was one discrepancy where I found a host had accidentally given me more resources, and I redid the entire testing, keeping a close eye on it. I do my best to detect these things. It’s a big deal for shared, but for the VPS instances it was a lot easier to check the resources and confirm I was only given the proper amount. I do my best, it’s not perfect, and if that confidence isn’t enough for shared hosting for you, I would just look at the VPS performance then. I think it’s ok for Shared, but that’s my opinion and you’re entitled to your own.

As far as doing it blind, I’ve tried. There are a few issues. The biggest two are cost (some of these companies cost hundreds per month to get accounts) and security. I need permission from their sysadmins to let my load testing through in a lot of cases. What I do also has the potential to affect real customers on their network which is something I don’t want to do. So having the companies working with me protects them better.

I don’t disagree with you for a second that blind testing would be better, I just simply don’t have the resources and can’t bypass the security measures without them realizing what I’m doing. If you’ve got ideas for solving either or both of those issues, I’m always happy to hear them!

Actually, I do 🙂 Will follow up directly in the near future.

I look forward to it, if you want to do so privately kevin at reviewsignal.

Hi Kevin,

Great review! Out of interest what was each site running? I see you mention each site was running the same dummy content and plugins, but do you have a list of the plugins and the theme that was used? (Sorry if I missed that in the post).

Thanks!

Thanks Jack.

The dummy sites were literally almost nothing. Just a few pages on Twenty Fourteen theme with lorem ipsum text, a couple dummy images. The plugins were only what the hosting company installed actively running. I also had the import plugin, a user role manager and my custom WPPerformanceTester which was added after load testing. None of those plugins run on the frontend at all.

You can still see one of the test sites that is up (with a domain I chuckle about every time): http://kevinohashibenchmark.com/

Why wasn’t http://websynthesis.com/ put on the list?

I addressed this at the beginning. They didn’t want to participate. They were included in Round 2 if you’re curious how they performed. They did very well in that round. https://reviewsignal.com/blog/2014/11/03/wordpress-hosting-performance-benchmarks-november-2014/

you forgot to rate them in order, for your users convenience

I rated them in groupings, I don’t think there is any set order that could be fairly applied.

Kevin –

Great work. Easily the most thorough hosting review I’ve seen yet (dating back to 2002!). The biggest problem with hosting reviews is all the affiliates supporting whoever pays them the most. While I see you’re tracking links here, I’m confident you’ve been at least marginally neutral, and that’s more than I can say for many others before you.

I’m currently running a site on Media Temple’s shared hosting. It’s a decent service. The control panel is a custom build and takes some getting used to. Their support is always available and while they rarely know the answers to simple questions – like where my own php.ini file was – right away, they don’t pretend to be smart and give you bogus info. They also go get the right info for you and are pleasant to deal with.

However, and this is for their GRID hosting, even handling just a hundred visitors at once with more than 1,000 posts in the database brings things to a crawl. A second site on the same type of hosting and running the same theme and plugins but with just 20 posts in the database loads every page in 1/4 the time.

Cloudways looks good; like they’re on the rise actually. Sure beats catching them at the beginning of their decline like I did with Host Gator a few years back. But A Small Orange’s VPS cloud hosting is going to get a shot from me. I like having my sites hosted at a datacenter located less than 5 miles from my home office. Gives me the hands-on element I prefer.

Any tips on their VPS Cloud packages, or any tier thereof, that you can pass on? My main site is a six-year-old WordPress site with a recently cleaned DB, but PHP frameworks with MySQL are my main focus. Any advice is appreciated.

Thanks!

Thank you for the high praise!

Affiliate money can corrupt (and does in most cases in the web hosting review industry). You’re on point. That said, it’s also a really practical way to make money and I think if done correctly can make perfect sense. Full disclaimer, you’re right, I do have affiliate deals with some of the companies listed. Every company listed I have deals with except Kinsta, Pantheon, PressLabs, and WebHostingBuzz. If anyone wants to claim money is corrupting these rankings (And I don’t think you are making that claim at all, it’s just a common one), then I’m doing a terrible job at it. 3/7 of the top tier don’t have affiliate programs that I’m aware of and I make nothing from people signing up with them. I do these reviews very publicly and as transparently as possible with people from every company involved commenting, giving feedback and even providing advice on how they are done so that they are tested fairly compared to their competitors. It’s probably not perfect, but I’m happy to explain every decision I made and disclose everything possible so that these tests are repeatable by anyone.

If you’re committed to giving ASO a try, make sure you use their WordPress optimized VPS template. It’s what I ran the testing on and it’s blazing fast. It doesn’t have the normal cPanel control panel or anything though. So I hope you’re fairly comfortable with a command line. This testing was on the 2GB plan. I’d probably start there and see how it performs; $30/month puts it in a class with the original shared offerings around that price point. If it isn’t fast enough, you could try emailing their support and asking for Ryan MacDonald, he’s their performance guru over there.

Some interesting results and a couple new names to play with. Thanks for sharing!

Great comparison. However, I don’t see some big names in the list like DigitalOcean. Did you miss it?

Digital Ocean doesn’t really offer a managed high performance WordPress. I tested them in the first edition of this way way back (actual testing was in 2013). You can get a link at the top of this article if you want to read. The short summary is they are a hardware/VPS provider, not a WordPress provider. Don’t expect their WordPress stack to perform well. Lots of companies listed here are deploying on Digital Ocean and get phenomenal results, so the hardware is capable there. It just needs to be set up by experts.

would love to see comparisons of these hosts for 100% HTTPS =)

Maybe next year 🙂

What about DO and ServerPilot? It’s a configuration I’d love to see compared to the others here. Right now, I’ve narrowed it down to that, LightningBase, Flywheel, and Pressidium. Pressidium seems to hit the price vs. quality sweet spot, but I feel weird purchasing hosting with servers in the UK (despite your findings here).

I tried Digital Ocean as a stand alone and they really aren’t in the same business. It’s a pretty default LAMP stack which just won’t hold up to these kinds of tests. That said, multiple companies in this test were using Digital Ocean for their servers (CloudWays, FlyWheel and I’m probably forgetting some others).

As far as ServerPilot goes, it’s not really a hosting company, so I didn’t include it in this test. There are a lot of configurations out there if you go the DIY route like ServerPilot, but I don’t think they quite fit under this test. I am thinking about testing them separately though with competitors like easyEngine and other public configs.

Makes sense. I’ve seen a few different comparisons between Cloudways and ServerPilot and ServerPilot seems to perform better, even without Varnish. How do you think DO/ServerPilot would stack up against something like Flywheel or LightningBase if you did happen to run them against each other? I know it’s all hypothetical and a guessing game… just looking for an opinion.

I have never used ServerPilot, so I don’t know. CloudWays generally performed better than FlyWheel, so if you’re saying ServerPilot outperformed them, I would suspect it would be better. LightningBase has been in the top tier for a while though, so that would be impressive if it outperformed them.

FYI, I did quite a few tests of Serverpilot installs on DO, Linode and Vultr. The standard install (NGiNX in front of Apache) is lightning fast, especially if NGiNX serves cached html documents (created by e.g. WP Rocket or WP SuperCache). However, when testing with Blitz.io, it usually crashed at around 200 concurrent users, as Apache was somehow still eating RAM like crazy.

When the setup was changed to a pure LEMP stack (no Apache), it held up a lot better, till around 900 concurrent users, or ~20 million visitors/day. That’s not too shabby.

What was really noticeable: the WordPress backend was incredibly fast on Vultr and Linode LEMP setups (I wouldn’t recommend DO), faster than any other I’ve ever seen, even better than Kinsta. If you or your clients are working a lot inside WP, that’s really nice to have.

Oh, and a quick note re: Pressidium – they are planning to expand and have US-based data center soon, as far as I know.

Thanks for sharing those results Phil. Have you published/documented them anywhere?

Thanks Kevin, good stuff!

what about custom shops like isprime.com? they host some big fish afaik. i think having metrics for their type of managed wp product would be interesting.

I don’t see any information about managed WordPress hosting there?

you’re right, from what i understand you’d get a managed vps or dedicated server, and have them install their wp stack on there. i also found isprime.com/wordpress.html

Ha Kevin,

Thanks a lot for this elaborate analysis, which helps to make an educated choice between hosting providers. I am wondering, did you have any experience with http://www.fastcomet.com/? I wonder how they are performing compared to others.

Never heard of them. I put out an open call in the WordPress community to nominate companies before I started the last round to make sure I got as many as possible that are truly in the playing field. A lot of companies offer ‘WordPress Hosting’ but most don’t really do much special beyond an installer. I don’t know if that’s what FastComet is, but unless they reach out or you can tell me they are the real deal, I can’t simply include everyone that says they do ‘WordPress Hosting.’ My tests make companies look very bad if they aren’t really in high performance WP hosting business, and making companies look bad is not my goal. So if you’re using them, you can test for yourself using LoadStorm/Blitz/WebPageTest to see what the results look like. If you’re just curious, you can email/call/whatever them and let them know about these tests. I’ve been accepting any company that is interested in participating and respecting those that don’t wish to be included. So they are more than welcome to reach out to me for inclusion in the 2016 edition.
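For readers who want a rough first look at their own host before reaching for LoadStorm, Blitz, or WebPageTest, here is a minimal sketch using only the Python standard library. It is not how the tests in this post were run and comes nowhere near their scale. The function names are made up for illustration, and it should only ever be pointed at a site you own or have permission to test.

```python
import http.client
import time
import urllib.request
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def time_request(url, timeout=10.0):
    """Return the response time in seconds, or None if the request failed."""
    start = time.perf_counter()
    try:
        with urllib.request.urlopen(url, timeout=timeout) as response:
            response.read()
    except (OSError, http.client.HTTPException):
        # URLError, HTTPError, and socket timeouts are all OSError subclasses.
        return None
    return time.perf_counter() - start


def run_batch(url, total_requests, concurrency):
    """Send total_requests GETs with at most `concurrency` in flight at once."""
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        return list(pool.map(time_request, [url] * total_requests))


def summarize(timings):
    """Report errors plus fastest, slowest, average, and spread in milliseconds."""
    ok = [t for t in timings if t is not None]
    summary = {"requests": len(timings), "errors": len(timings) - len(ok)}
    if ok:
        summary.update(
            fastest_ms=min(ok) * 1000,
            slowest_ms=max(ok) * 1000,
            average_ms=mean(ok) * 1000,
            spread_ms=(max(ok) - min(ok)) * 1000,
        )
    return summary


# Example usage (the URL is a placeholder for a site you control):
# print(summarize(run_batch("https://example.com/", total_requests=50, concurrency=5)))
```

The spread between the fastest and slowest response is the same consistency measure quoted for the Blitz results in this post; a small spread with few errors is what the top tier hosts had in common.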

Hey Kevin,

Great job with the update. I’m glad that you mentioned the difference between a shared environment and a VPS.

There is one thing I believe may be a unique circumstance that has not yet been talked about, to my knowledge.

WP Engine is going to keep sites on shared resources from using more than a predetermined amount. I know that a regular WPE shared environment would be very powerful, but even if 10 sites are going to get the best performance, they would not use more than a certain amount of preset resources.

I agree with your findings and I’m happy to see that PressLabs is proving to be an excellent company. Last but not least, Pagely’s VPS is using Redis & HHVM. It also has some other things that I believe balance it out in terms of power.

I have the Pagely shared plan & VPS-1, and in my testing the VPS is faster than the shared plan environment.

Is there a chance that you will include the VPS in your next test? Please don’t get me wrong, I agree with you 100%: they are a force to be reckoned with and my favorite.

Great review. I look forward to hearing more about the WP Engine environment. Keep in mind they have moved from Linode to Rackspace.

This too may have to do with their increased performance?

All the best,

Tom

Thanks Thomas.

VPS can definitely be faster, but shared can outperform smaller VPSs sometimes because there is a larger shared pool of resources at its disposal. That was all I was trying to communicate.

As far as testing goes, I honestly couldn’t tell you right now what next test will look like. I think some major changes are due to fit with how the market has evolved since I started. If you have some ideas, I’m all ears 🙂

Linode is pretty well known for good performance. Although, I have no idea how it would stack up against RackSpace. And your Pagely is due for a big performance increase as they switch to Aurora on Amazon.

Great post. Can you review https://www.neolo.com next time? thanks!

Do they do managed WordPress?

Hi Kevin. Your reviews are great. I’m looking for a new host and have narrowed it down to Pantheon and Pressidium. Pressidium is more affordable as I need to host 3 sites. Does the fact that they are UK-based hurt performance for a primarily US audience? Their site shows servers in the US.

Casey,

Thanks!

They were very marginally slower than most of their US based peers to US locations. That said, look at the results in Blitz and LoadStorm. There is average, max and min on Blitz specifically. They had a 1ms spread, it was incredibly reliable, albeit slower because of the distance. But we’re not talking a lot of time in the grand scheme of things at 100-200ms difference. Worth asking them, they probably have some ways to speed up US based delivery (eg CDN, or new datacenter locations in US). I have to imagine they will be adding US locations because of the demand. Could have happened since I did my testing. I’d reach out to them directly first and see what they say.

Hey Kevin!

Any thoughts on GoDaddy’s latest managed WP hosting? Also, any suggestions if our website is catered to Malaysia/Singapore?

Leo,

Performance wise, it’s done very well in these tests in the past. It ran into some issues this time at the very end of some of our load tests. Talking with their team after these results were published, they think I triggered some security features rather than their servers not being able to handle it. It’s certainly plausible if you look at the results. As far as everything else connected to it, I can’t really say much. I have a lot of review data on GoDaddy but it’s not separated by which plan they are on. GoDaddy doesn’t have a great reputation and I know they are very actively working to try to turn that around with the new team they’ve brought in. What would you experience as a customer? I’m not sure beyond pretty good performance.

If you’re in Malaysia/Singapore, I know Amazon has a data center in that region. So any company built on their infrastructure might fit well (albeit Amazon isn’t cheap). Pagely and CloudWays come to mind as both using Amazon.

Thanks for this article!

TrafficPlanetHosting was my previous hosting, but they seem to have weird sleeping technology, where the website quickly goes to sleep or something, and then slow to wake up when you start surfing it. The speeds were all over the place.

Tried WPengine, wasn’t much faster for the price.

Thanks to your article, I found A Small Orange. I signed up with their level II Cloud VPS, installed the WordPress LEMP stack, and it’s super blazing fast, the speed is consistent. Loving it.

I wish there were a way for them to give you some credit for linking me to them.

I did provide their sales staff with a link to this article, so maybe they’ll give you a tight handshake or something ha.

thanks again for breaking all this down.

Glad it helped you Josh 🙂

Hey, Josh (and Kevin),

My wife and I were looking for managed WP systems when we came across the site.

Traffic Planet: that sounds like (I don’t know the specific phrasing) the virtual hosting allocation that, I’m told, folks like BlueHost / HostMonster … and other economy hosting plans offer. It allocates memory to your site according to history and then pumps it up when they notice the spike. The only problem: that spike is usually because you had a post go viral or had marketing / advertising efforts thrown at it. Damn. Read good things about TPH, too.

Kevin: do you think this tech could also be found in others you have tested?

And. I’ll send you a beer if you test Traffic Planet next year!!!

Nat,

All the companies are opt-in. I’ve got them on my list for 2016, but if they aren’t interested, I won’t test them.

As far as the tech, it’s possible. Different companies are going to have different scaling strategies. I have no idea what they are using and how it compares to others, so I can’t really say anything definitive.

Hi Kevin,

Love your work!

I was wondering: I’m looking at LightningBase.

My audience is in France and their datacenter is in Chicago.

Even if you proved they’re awesome, would I still get great performance that far from the server?

I know they provide a CDN, but would LB + CDN outperform, let’s say, futurehosting or knownhost in London?

I don’t know futurehosting. KnownHost isn’t specialized in WordPress. If your option was specialized WP in US vs local normal hosting. I would generally expect latency to be smaller than slowness of a normal host. If you were configuring a lot of optimizations so your KnownHost (VPS I am assuming) is similar, the performance would probably be better locally.

You can look at Pressidium in the test results. Their plan was EU based and being tested from the US. That should give you an idea of how much latency it would add (not a huge amount with a well performing host), but a bit noticeable. You could consider them as an option too if you wanted a top notch EU based company.

Thanks for your fast reply, and sorry for the hard to understand message : I was typing on my phone.

I looked at the Pressidium results. So you are saying that the 300 ms average difference (webpagetest.org results) is explained only by distance?

If so, I’ll try them, thanks for the info. (and I’ll click your link 🙂 )

BTW, Knownhost is a rather respected host at WHT.

Garry,

I’m familiar with KnownHost and they have a quite good rating here at Review Signal too (https://reviewsignal.com/webhosting/company/13/knownhost/), but they aren’t in the same class as the managed WP providers. For a managed VPS, they look great, I have no idea how well they manage WP, that’s all.

As far as WPT results, yeah, if you look at the locations, it was VA, CO, FL, CA as the testing states. Also look at the blitz results. Despite having a higher average ping, it was incredibly consistent. If you’re really curious about latency and location, there is this cool chart http://www.verizonenterprise.com/about/network/latency/ it gives you some idea of how distance/geography affects latency. The Atlantic ocean seems to add 70-80ms naturally, on top of whatever other routing needs to happen.

If you were considering Pressidium and just worried about latency, they should be way better in Europe since their servers are based there. I would give them a try 🙂
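
To put a rough number on the distance part of that latency, here is a minimal sketch in Python. It is not from the tests above. It assumes signals travel through fiber at about 200,000 km/s (roughly two thirds of the speed of light) and uses approximate coordinates for New York and London as a stand-in for a transatlantic route. The function names are made up for illustration. The result is only a physical floor: real routes are longer than the great-circle path and add routing delay on top.

```python
import math

EARTH_RADIUS_KM = 6371.0
# Assumption: light in optical fiber travels at roughly 2/3 of c.
FIBER_SPEED_KM_PER_S = 200_000.0


def great_circle_km(lat1, lon1, lat2, lon2):
    """Haversine distance in km between two (lat, lon) points in degrees."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def min_round_trip_ms(distance_km):
    """Lower bound on round-trip time over a straight fiber of this length."""
    return 2 * distance_km / FIBER_SPEED_KM_PER_S * 1000


# Approximate city coordinates, chosen only as an example route.
NEW_YORK = (40.71, -74.01)
LONDON = (51.51, -0.13)

distance = great_circle_km(*NEW_YORK, *LONDON)
rtt = min_round_trip_ms(distance)
print(f"{distance:.0f} km, at least {rtt:.0f} ms round trip")
```

Under those assumptions the floor comes out somewhat below the 70-80ms mentioned above, which is what you would expect once real cable paths and routing hops are added.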

Hi

My experience with an A Small Orange VPS has been HORRIBLE :(

Lots of downtime and issues, with no one monitoring servers or responding on time (phone support is not 24×7) and you just have to create email tickets. Chat has like 30 people waiting, so it’s not an option, especially if you are on the road.

On top of that, they give you a VPS server in the default configuration with no speed optimization or backup, which I learnt the hard way.

Site issues, lost code, oops, no backup.

Site slow for many days, losing Google ranking. ASO said yes, we can make it faster, as a lot of services are not installed. WHAT? Why were they not installed to begin with? Why would you give a shitty server configuration to a customer?

What server or type of account did you use? I am trying to see if their Clementine Managed is any better than their VPS, before I learn my lesson and move on to a hopefully better hosting company.

Lost too much revenue and Google rankings because of their poor service.

Neal

I tested with the LEMP WordPress optimized VPS stack, not the default.

I’m also very sorry to hear about your experience. Is the support issue recent? I saw something about them having issues because they were taking over the support operations of another web host that has perhaps overloaded their team. Not sure what’s going on with that.

Thanks for the awesome work!

I was wondering.

ASO’s results are great, and compared to other providers, their pricing isn’t (openly) based on number of hits.

However, I see you were using the LEMP setup. Is that free? Plus, is that setup compatible with a cache plugin like WP Rocket?

I also understand the LEMP setup isn’t available with cPanel. Do you have experience with their NGINX optimized setup for cPanel?

And last question, if I may: will the next plan up, with more RAM, provide more speed, or is it useful only if there are several websites on the VPS?

A few don’t base their pricing on hits (but many do, you are right).

The LEMP stack is free, it’s a template you can choose on your VPS. I don’t know about its compatibility with WP Rocket, you would need to ask them.

You are correct, no cPanel, and I haven’t tested their Nginx optimized cPanel. In general, just switching to Nginx alone doesn’t instantly give awesome performance. If you’re curious, you can take a look at some test results I published of different configs (Apache vs Nginx): https://github.com/kevinohashi/WordPressVPS/tree/master/loadtest_results. Nginx with some optimization can be amazingly fast, but out of the box, Apache is comparable. I’m not sure how/what the ‘optimized’ Nginx configuration is actually optimized for.

Adding more RAM can make some things faster (larger caches) but really it’s a case by case kind of thing. Adding more doesn’t make things faster unless RAM is the bottleneck you’re currently experiencing.

Thanks for your answer Kevin.

I just talked to ASO support over live chat, and they told me something I think interested people should know:

=> About the LEMP stack:

Abdul Ghani 10:48:24 am

I’ve read good things about your LEMP stacks, but there are 2 versions. What are the differences please?

Support Operator 10:49:40 am

Oh gosh, I can’t say for sure. While we do offer LEMP and the other Linux OSes, as well as the 2 Windows OSes, we don’t actually do support for anything except the CentOS with cPanel servers, so we don’t have any training on the other operating systems. I do apologize for not being able to answer your question D:

Abdul Ghani 10:50:00 am

not answering is ok

but you say there is no support?

Support Operator 10:52:26 am

Not for the servers other than the CentOS with cPanel servers. We can reboot the server and make sure it’s on, but the support for them is extremely limited. We can’t check internal errors, so if httpd or something like that were to crash, we wouldn’t be able to help with that on a LEMP server but could on the cPanel servers. I totally understand wanting the LEMP server, but if you’re ordering with us, I would recommend the cPanel server, so we can help out if needed.

=> And about the Nginx “optimized” version:

Abdul Ghani 10:53:49 am

fair enough

and what about the cPanel Nginx optimized version

does that come with complete support?

Support Operator 10:55:06 am

We do a bit more for that, but the standard CentOS with cPanel servers are the ones we actually do the full support for. On the Apache servers, we can install Nginx as a reverse proxy, to help with server loads.

Abdul Ghani 10:56:09 am

what kind of stuff isn’t covered with that version?

Support Operator 10:57:03 am

We wouldn’t be able to make any changes to the web server. For any issues you might run into with Nginx, we couldn’t troubleshoot them the way we could with Apache. We could still assist with things inside cPanel or WHM themselves, though.

=> I was really going for the LEMP stack, but since I don’t have the skills to run the server myself, I’ll have to do otherwise.

Good to know and glad you shared that information here. Thank you.

Interested in opinions…which of the higher scoring, lower priced hosts tested here best suits a small eCommerce business with very little server/hosting infrastructure knowledge? Siteground seems promising, but they don’t really play up the “managed” aspect of their hosting. My Flywheel trial was relatively painless, and they’re supportive, but costly for more than 1 site. Cloudways provided decent KB articles on setup, and how to monitor and manage server health, but their support staff weren’t very helpful about WordPress-specific issues.

I’d rather not have to fiddle with nodes, cache and CDN settings, nor do I have the time to learn about them. So I am willing to pay for truly managed hosting.

Hi Kevin, for DreamPress did you try with HHVM or OpCache?

DreamHost claims that with HHVM the load time was 4x faster: dreamhost.com/blog/2015/05/27/dreampress-2-the-evolution-of-managed-wordpress-hosting-at-dreamhost/

If you have not tried HHVM, do you mind re-running the test with HHVM and letting us know whether you see a change in performance?

Thanks in advance.

Best

I used whatever was default at the time (I don’t believe HHVM was). I will be re-running tests for 2016 in the coming year and updating then.

You guys should test kickassd.com in your next roundup. They offer managed WordPress hosting with Docker integrated into cPanel, which adds huge flexibility and power. If your site starts to outgrow their shared hosting, then simply start dividing it up into Docker containers from within cPanel.

Hi Kevin,

Thanks for this epic article. I’ve been looking for a new hosting provider for the last couple of weeks. No idea why I didn’t find your review earlier.

I still have a rather small site with around 7,000 monthly page views, but it’s growing quickly. After a lot of going back and forth, I narrowed it down to either Cloudways or Pressidium.

My target audience is in the US, which would suggest better speed with Cloudways when I select a US data center. Also, the pricing is pretty spectacular. I guess the $15 option would be more than enough for me at the moment.

Pressidium, on the other hand, has a really cool concept and I like their style a lot. Everything is geared towards WordPress, plus the support appears to be top notch. I just wrote them an email with a few questions and got a reply from the co-founder Andrew, who exceeded all expectations with his thorough answer, addressing all my concerns in great detail. Drawbacks are the potentially slower loading times for US users and the limits on monthly visits.

I have to admit that I’m stuck at this point. What would your suggestion be?

Lars,

I’m glad you liked the article. I wish I could tell you why you didn’t find it, probably a lot of other people spamming crappy affiliate links instead of real information 🙁

For 7k visitors a month, CloudWays would be more than enough, you are correct.

Pressidium does have a very different concept from CloudWays and you seemed to pick up on their value-add. Andrew is a very nice guy in my experience too.

I can’t give you an answer, but I can tell you how I think about these things.

Both have very good performance. Especially at the level of traffic you’re experiencing, I think it’s a non issue. That includes ping. Pressidium was still pretty fast to the US in my experience.

So what about price? What is your budget? What are you willing/comfortable to spend on good hosting? If both fit within those bounds, you start to look at cost scaling. CloudWays scales better from a pure price standpoint. Except you got to experience some of the Pressidium support, which seemingly impressed you. How much more would you be willing to pay for that?

I personally value support quality over almost everything else (assuming some minimum service standard is met). I’m willing to pay a fair bit extra for it too. Not everyone is like that. Not every business can justify it. Not every site can justify it. I tier my hosting based on what it is. Fun hackathon project? Dump it on an unmanaged VPS with the cheapest provider that works. Important client eCommerce site? Expensive, managed hosting with SLA. That’s how I think about these things, and I hope that helps you think about your choice. I don’t think between those two there is a wrong choice. But the question in your mind seems to be will you regret not paying the extra for what you think is excellent support. Good news is… you can always move later (although it can be painful sometimes to migrate).

Hey Kevin,

Thanks for your extended reply. I think you’re right about the value of great support.

I just made the decision and was about to purchase a plan at Pressidium when their website stopped responding. Apparently, their upstream provider is experiencing a DDoS attack, which is causing the downtime.

I’ve got to admit that this puts me off a little bit. Is this something that can happen to any WordPress hosting provider, or should I indeed be worried?

It can happen to anyone. How often and how well they are able to deal with it may vary based on underlying provider. But I don’t have any definitive information to provide about who is better at security.

ASO looks interesting for VPS, but they apparently don’t have any phone number to call, nor do they have even a simple contact form. How in the hell does a company do business without any method of communication?

In light of recent events, I wouldn’t recommend them anyway. They’ve had some major issues since I published this.

An update from my side. I created a test account at Cloudways and their speed is alright, but the support is just a bad joke. The people I talked to in the live chat didn’t even have a basic understanding of WordPress.

That’s why I went with Pressidium and I’m quite happy so far. Speed is good (almost as fast as Cloudways from US servers, and faster from Europe) and the support is superb. I also like their interface a lot. It’s clean and modern and it’s not overloaded with too many options.

The only thing that’s bugging me just a tiny bit is their way of counting visits: all the bot traffic counts as visits as well. Bot traffic is usually several times the number of regular visitors, so you reach their limits pretty quickly. Even though they don’t seem to be too strict when it comes to overage and won’t charge you right away, this feels a little bit restrictive.

Well, I’m glad you’re happy with your choice. I have never been a fan of this restricting by visitors, but it seems like that’s the way this niche went. I suspect it will go away in a few years as the competition continues to go on. But that’s all just speculation and commentary at this point.

Another vote for Pressidium… Support is second to none. We’ve worked with tens of managed hosts in the past 18 months and these guys are on another level.

They also seem to have a focus on always improving: my sites have got faster since I first signed up, and some even feel like a native app because they are so damn quick!

I’m not affiliated with them in any way… just a happy customer of nearly a year.
