We, the public, just won a battle. But the war for internet governance that actually represents the public interest is far from over. This could be a turning point or a minor blip that the entrenched interests laugh about years from now.

The big news today is that ICANN withheld consent for a change of control of the Public Interest Registry (PIR). This effectively kills the deal where Ethos Capital, a private equity firm being run by ICANN insiders, tried to buy the rights to run .ORG from The Internet Society (ISOC) for 1.135 billion dollars.

First, let's celebrate. The non profit community and the general public just had a small victory at ICANN. I am not sure when the public interest was last well represented in a major ICANN decision where substantial amounts of money were at stake. This is a historic win for the public good and I couldn't be happier about that. With that said, it's time to look at what happened.

What reasons did ICANN cite for its rejection?

The decision to reject lists a multitude of reasons for why the deal shouldn't go through. ICANN only delayed the decision after a letter from the Attorney General of California, Xavier Becerra, which unequivocally stated, "Given the concerns stated above, and based on the information provided, the .ORG registry and the global Internet community – of which innumerable Californians are a part – are better served if ICANN withholds approval of the proposed sale and transfer of PIR and the .ORG registry to the private equity firm Ethos Capital." (emphasis added)

The primary reasons ICANN considered, according to its statement, can be summarized as follows:

- For profit ownership vs non-profit ownership of PIR.

- PIR being converted from a non profit into a for profit entity.

- $360 million of debt being taken on that will need to be serviced

- Untested Stewardship Council / Why can't non profit PIR pursue new business interests

- ICANN being made responsible for handling disputes

The first three issues are directly addressed in the Becerra letter. I don't think it's a mistake that these are listed first. They are the easiest to understand, and their problems are the easiest to see.

The 4th and 5th issues are a bit trickier to understand. The stewardship council was Ethos Capital's way of trying to placate the non profit community by saying you will have a voice in our decision making. Believing that voice would outweigh the interests of the investors would be a mistake. Let's not mince words, Ethos are here to make money. Trusting a private equity firm to choose between the public good and profit is trusting a fox to watch the hen house. A terrible mistake, no matter what the fox pleads.

The 5th issue is about dispute handling and public interest commitments. I am not an expert, but you can read this very detailed piece by Kathy Kleinman about them. Kathy absolutely rips them apart in a way that only a lawyer who also used to be the public policy director for PIR, and who has been involved in ICANN for decades, can. Kathy's piece is a masterpiece of critical thinking and evidence showing us why these commitments shouldn't be taken seriously.

In conclusion, ICANN wrote "ICANN's actions are thereby in accordance with ICANN's Articles of Incorporation and Bylaws' public interest mandates, and are also aligned with how the CA-AGO explained his views of the public interest." The California AG saved .org.

What issues did ICANN not address in its rejection?

While it's important to see what the public reasoning for the rejection is, it's also important to take a moment and talk about what wasn't talked about.

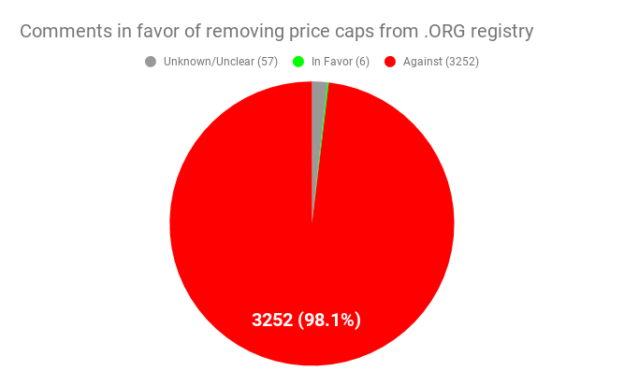

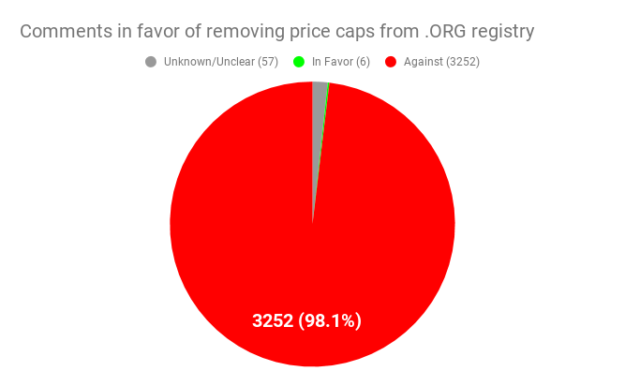

The most glaring omission is the event which precipitated this whole saga: the "Proposed Renewal of .org Registry Agreement," which sounds innocuous but contained this language: "In alignment with the base registry agreement, the price cap provisions in the current .org agreement, which limited the price of registrations and allowable price increases for registrations, are removed from the .org renewal agreement." That sentence is what got me involved with this entire issue after reading about it on Hacker News. As far as I know, this was one of the largest public comments ICANN ever received (only later to be surpassed by the .com renewal public comment, which tripled its opposition for the same issue on a different registry). What did the public tell ICANN? No. We are against this. I analyzed the comments and wrote about it in The Case for Regulatory Capture at ICANN. That article was ultimately cited in the Becerra letter because in over 3000 comments, I could only find six (6!) in favor, many of them with ties to registry operators.

Some might argue that the events are unrelated. Specifically, ISOC and Ethos Capital would make those arguments. Whether one could prove they were or were not is another matter, but the removal of price caps opened the door to the opportunity for higher levels of rent seeking. ICANN's latest mantra of 'we are not a price regulator' is in line with the legacy TLD registry operators' interests, which brings us to the second major problem and omission.

The other major issue is that ICANN mentions 'supporting the multistakeholder model' but doesn't mention the complete absence of it during this whole process. The registry agreements for .org and now .com were pre-negotiated by ICANN staff, without discussion or input from the stakeholders. They were approved rapidly with no changes despite overwhelming opposition (98%+ in both cases). The .com agreement was passed within a day during a pandemic after being given a staff report which said "The comments about the proposed changes to the maximum allowable wholesale price for .COM registry services were nearly unanimous in voicing disagreement or concern though they provided a variety of reasons why they are against the change." ICANN doesn't care about the multistakeholder model other than as a token way to try to appear like a responsible steward of the DNS. What kind of multistakeholder model can in good faith take nearly unanimous disagreement and go against it? Regulatory capture is still alive and well at ICANN and ICANN doesn't seem interested in addressing it.

What's next?

What I've been seeing in the lead up to this decision is a public campaign by people affiliated with registries (VeriSign and PIR), and one message (1, 2, 3) they are trying to push is that giving up control to the California AG is dangerous for ICANN in the long run. Let's be clear why it's dangerous: it means real oversight and accountability to ICANN's public charter: "ICANN must operate in a manner consistent with these Bylaws for the benefit of the Internet community as a whole."

ICANN spent years trying to free itself from the US Government and argued it would be a responsible self-governing entity, accountable to the multistakeholder process and representing the public interest for the benefit of everyone. It sounds nice; it was an aspirational goal. But look at the names who lobbied for it in front of Congress: Steve Del Bianco and Jonathan Zuck were VeriSign lobbyists. Steve still is. Today, they are the policy chair of the Commercial and Business Users' constituency and Vice Policy Chair of the At-Large Advisory Committee (ALAC) respectively. Let's pause for a moment and appreciate that two active/former lobbyists for the most profitable registry operator are policy chairs for groups, including a group that is supposed to represent the individual internet user. Jonathan Zuck's organization did $363,202 in business with his former organization ACT, which is funded by VeriSign, in 2018 according to his latest 990 filing.

So let's focus on where this push is coming from and why. A lack of oversight lets corporate interests get pushed without being checked by the public interest. ICANN easily ignores the public interest as we've seen time and time again. It's become a near carte blanche for registry interests.

The California AG asserting authority over ICANN has been the first roadblock in years. Becerra just announced to the ICANN world that it crossed a line and has finally brought scrutiny on itself. Scrutiny could be very bad for the .com monopoly, which is the only reason VeriSign exists and makes up the majority of its value. So expect to see an immense amount of lobbying coming from them. Maybe it looks like a "think of the children" argument? Maybe it looks like "ICANN needs independence"? Maybe it will look like something else. But it's coming. VeriSign has billions at stake and I am sure they will make every effort to protect their golden goose.

In Conclusion

We're in uncharted territory at this point. The .ORG scandal was definitely the hill to die on for people who want to see a more accountable ICANN which represents the public interest. The cartoon villain plot of a former CEO advising a company into buying the non profit registry to exploit it for private investors at the expense of the world's do-gooders was perfect. It's a symptom of the capture at ICANN that they thought they could even get away with it.

My hope is that knowing that the California AG has oversight of ICANN will force some serious reconsideration of their behavior and decision making. My fear is that this was a one-time response to an egregious violation of the public good, that minor trespasses will go unchecked, and that we gradually build back up to where we were yesterday before this decision.

Thank Yous

I started this journey a year ago from reading a headline, and I've connected with a lot of amazing folks who have helped push in the direction that led us to today. So as an acknowledgement to them, I wanted to say thank you.

My lawyers and friends who helped review things and advise me, you know who you are.

Kieran McCarthy and Timothy Lee at The Register and Ars Technica respectively for being the first journalists who listened and continued to cover the issue.

Richard Kirkendall, NameCheap's CEO, who has continually pushed these issues and brought attention to them with his large audience of customers.

Nat Cohen and Zak Muscovich at the ICA, who have been involved on the issue, were often the first people dissenting in a thoughtful manner, and tirelessly participate in the convoluted ICANN process.

George Kirikos, who called the private equity play from the very beginning, was banned from the GNSO working groups, voluntarily left ALAC after calling out its capture, and continues to be a voice of reason and outrage in the ICANN community. (Edited: banned from GNSO and left ALAC)

Mitch Stoltz and the EFF for their active and continued effort to #SaveDotOrg.

Everyone else involved in the #SaveDotOrg campaign who helped make this a reality.

You all made a difference today and I couldn't be prouder to have been on the same team.

WordPress & WooCommerce Hosting Performance Benchmarks 2021

WordPress & WooCommerce Hosting Performance Benchmarks 2021 WooCommerce Hosting Performance Benchmarks 2020

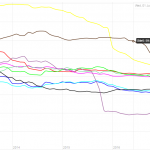

WooCommerce Hosting Performance Benchmarks 2020 WordPress Hosting Performance Benchmarks (2020)

WordPress Hosting Performance Benchmarks (2020) The Case for Regulatory Capture at ICANN

The Case for Regulatory Capture at ICANN WordPress Hosting – Does Price Give Better Performance?

WordPress Hosting – Does Price Give Better Performance? Hostinger Review – 0 Stars for Lack of Ethics

Hostinger Review – 0 Stars for Lack of Ethics The Sinking of Site5 – Tracking EIG Brands Post Acquisition

The Sinking of Site5 – Tracking EIG Brands Post Acquisition Dirty, Slimy, Shady Secrets of the Web Hosting Review (Under)World – Episode 1

Dirty, Slimy, Shady Secrets of the Web Hosting Review (Under)World – Episode 1 Free Web Hosting Offers for Startups

Free Web Hosting Offers for Startups