Category Archives: Review Signal News

WordPress Hosting Performance Benchmarks (2018)

The benchmarks are available here.

Black Friday and Cyber Monday Web Hosting Deals (2017)

Missing a deal? Please Contact Us

| Company | Offer | Restrictions | Coupon | Start | End |

| A2 Hosting | 67% off Shared | | HUGE67 | Nov 23 | Nov 27 |

| A2 Hosting | 50% off Managed / Core VPS | | CMVPS | Nov 23 | Nov 27 |

| A2 Hosting | 40% off Reseller | | RSL40 | Nov 23 | Nov 27 |

| BlueHost | $2.65/month | | | Nov 24 | Nov 27 |

| CloudWays | $150 Hosting Credit | Credit applied at 10% monthly bill until credit expires | BF150 | Nov 21 | Dec 11 |

| FlyWheel | 3 Months Free | Annual subscription | flyday17 | Nov 20 | Nov 28 |

| GreenGeeks | 70% off shared hosting | | | Nov 24 | Nov 27 |

| HostGator | 80% off | | | | |

| InMotion Hosting | $2.95/month | | | Nov 24 | |

| Kickassd | 6 months free | annual plan | 50FRIDAY | Nov 21 | Nov 24 |

| Kinsta | 30% off first month | Discount applied with support ticket (put in coupon code) | ReviewsignalBF2017 | Nov 24 | Nov 27 |

| LiquidWeb | Dedicated Servers 50% off for 3 months | | BF17PROMO | | Nov 30 |

| LiquidWeb | VPS 50% off for 3 months | | BF17PROMO | | Nov 30 |

| MediaTemple | 40% off Annual Plans | | | Nov 24 | Nov 28 |

| Nestify | $49/year for Shared Personal Plan (Normally $19.99/month) | New customers | REVIEWSIGNAL2017 | Nov 23 | Nov 30 |

| Nestify | $199/year for VPS-Lite Plan (Normally $79/month) | New customers | REVIEWSIGNAL2017 | Nov 23 | Nov 30 |

| Nexcess | 80% off First Month | New Customers Only | BF17 | Nov 24 | Nov 27 |

| SiteGround | 70% off Shared | | | Nov 24 | Nov 27 |

| WPEngine | 35% off first payment | | cyberwpe35 | Nov 22 | Nov 30 |

| WPX Hosting | Double Sites, Bandwidth, Disk Space | Two Year Purchase | | Nov 22 | Nov 29 |

| WPX Hosting | Three months free | Annual Subscription | | Nov 22 | Nov 29 |

| WPX Hosting | $1 First Month | $1 for the first month | | Nov 22 | Nov 29 |

Review Signal Celebrates 5 Years of Providing Honest Web Hosting Reviews

Review Signal launched on September 25, 2012. TechCrunch wrote "Web Hosting Reviews are a Cesspool. Review Signal Wants to Fix That." It was a huge moment because it capped roughly two years of development and one Master's thesis worth of effort. The author wrote a pretty accurate assessment of the state of web hosting reviews and the challenges facing Review Signal. Well, Review Signal has managed to last. It added Amazon AWS along with a bunch of other hosting companies. It has kept its independence and only made one algorithm update. The goal of expansion beyond hosting hasn't happened. But Review Signal has managed to become the de facto number one source for WordPress hosting reviews for anyone who cares about performance, thanks to our annual WordPress Hosting Performance Benchmarks.

Looking at the past five years, I've wondered what sort of impact Review Signal has made. One of the saddest impacts has been doing post-mortem write-ups about once-great companies. The original was The Rise and Fall of A Small Orange. It was a very personal analysis because they were a company that was ranked #1 on Review Signal when it launched. I had to personally talk the CEO into creating an affiliate program specifically for Review Signal because he was against web hosting review sites. He was against them for the same reason I was: they were just pay-to-play junk masquerading as 'reviews.' I had to convince him Review Signal was different, and that if something different and honest were going to survive financially, I had to at least make a little money from referrals or I couldn't afford to continue to operate. Ultimately, based on the numbers I saw, it's possible Review Signal generated more than 10% of their business. They kept growing until they were acquired by Endurance International Group (EIG), after which their service fell off a cliff. Sadly, it wasn't the only company to follow this story; The Sinking of Site5 was the follow-up.

The effort for honest reviews spawned one site which copied the same idea of using Twitter data to power it. They didn't do affiliate links and ultimately sold out to a less-than-savory web hosting review site which touts some EIG brands near the top of its rankings. That was another unfortunate post-mortem I had to write.

At least posting about some of the dirty, slimy, shady secrets of the web hosting review world stopped or stemmed some of the bad behavior - right?

I guess not. It's still just another Tuesday when someone insults what it should mean to run a review site.

But to end on a positive note, I'm quite proud Review Signal has managed to stick around. I've disclosed a lot about it this year in a big interview on Indie Hackers. It may not have changed the world, but it has definitely helped thousands of people make more informed and hopefully better decisions in a market plagued with lies. So for that, I am proud, and here's to the next five years - I hope Review Signal helps more people and makes a bigger impact on the industry as a whole.

Uncovering the Rose Hosting Spam Network on Quora

Welcome back to Dirty, Slimy, Shady Secrets of the Web Hosting Review (Under) World - Episode 3! Read Episode 1 | Episode 2

Today's post features Rose Hosting, who I refuse to link to because their whole business model seems to involve comment spamming this blog and other sources of information. What started with a simple spam comment sent me down a rabbit hole I wasn't prepared for and shed light on a fairly large spam operation that spanned multiple sites. My primary focus became Quora, with a secondary focus on the web hosting review sites also being manipulated.

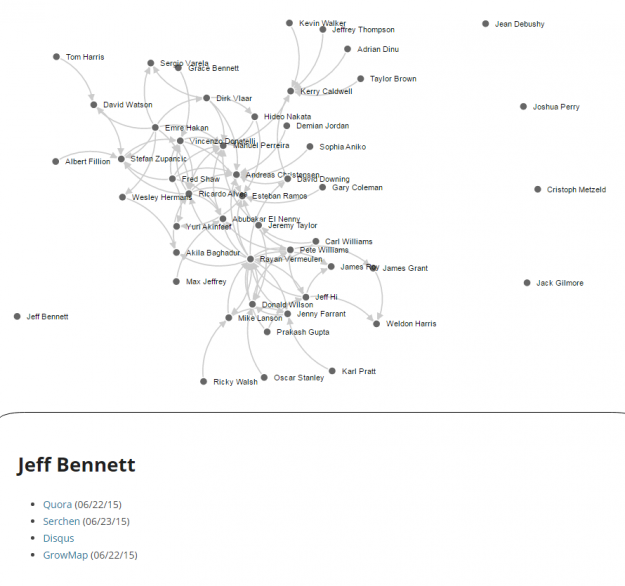

Visualization of Rose Hosting Quora Spam Network. An interactive version is available at the end of the article.

The Beginning

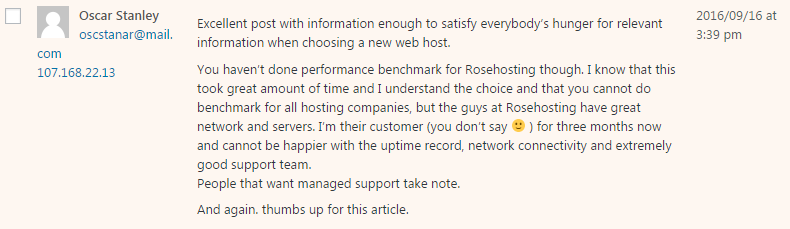

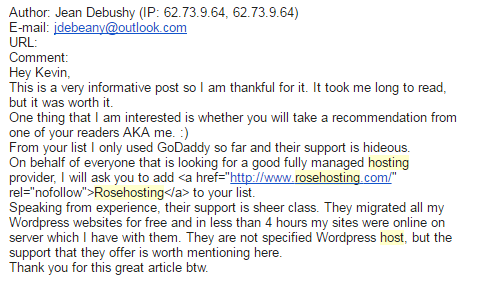

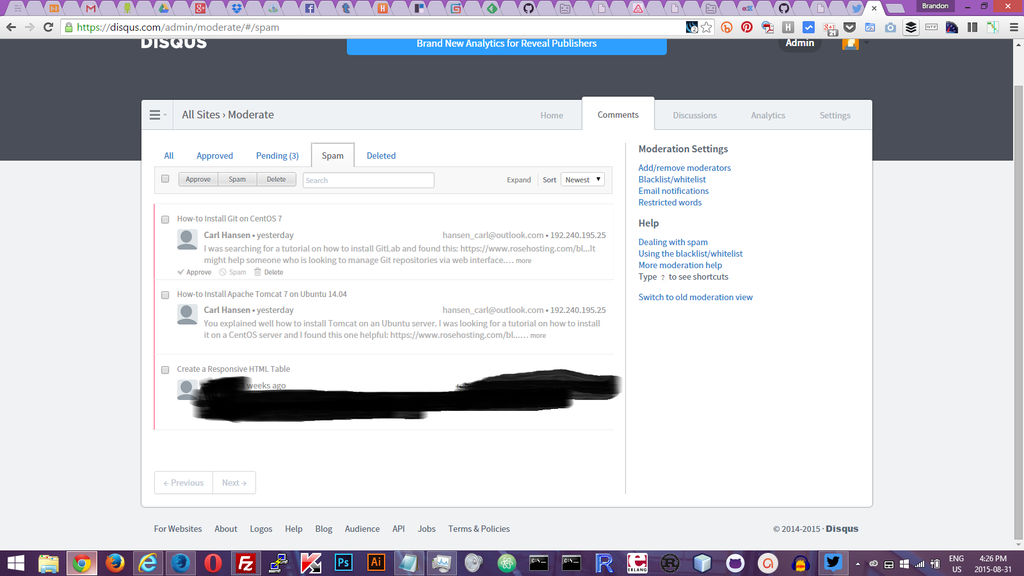

It started with a simple spam comment.

The poster tries to compliment the post and then drops in a RoseHosting mention and praises it.

But wait, there's an IP address! Looks like they made a mistake this time.

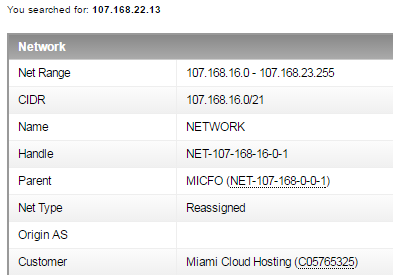

So Miami Cloud Hosting is who owns the IP space that this comment came from. Let's see what comes up when I ping rosehosting.com

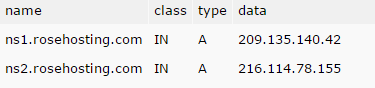

If you go to that IP, rosehosting.com shows up. So it's correct. Also if you look at their DNS:

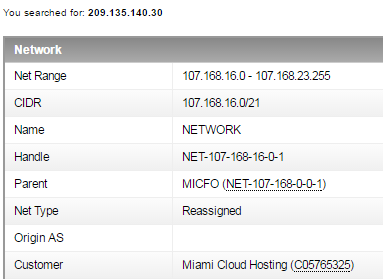

So we're 12 IPs away on that A record. Let's check out that IP that actually responded on ARIN.

Bingo. Same Miami Cloud Hosting.
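The "how far apart are these two addresses" check above can be sketched with Python's standard `ipaddress` module. The addresses below are placeholders from the reserved documentation ranges, not the real ones (those only appear in the screenshots), and the `/24` default is an assumption. Sharing a block is only a hint; the ARIN lookup is what actually ties the range to an owner.

```python
import ipaddress

def ip_distance(a: str, b: str) -> int:
    """Number of addresses between two IPv4 addresses."""
    return abs(int(ipaddress.ip_address(a)) - int(ipaddress.ip_address(b)))

def same_block(a: str, b: str, prefix: int = 24) -> bool:
    """True if b falls inside the /prefix network that contains a."""
    network = ipaddress.ip_network(f"{a}/{prefix}", strict=False)
    return ipaddress.ip_address(b) in network

# Placeholder addresses (RFC 5737 documentation ranges), not the real ones.
commenter_ip = "203.0.113.10"
site_ip = "203.0.113.22"

print(ip_distance(commenter_ip, site_ip))  # 12
print(same_block(commenter_ip, site_ip))   # True
```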

So: a fakeish looking name, an email with zero Google search results, and a comment coming from the same IP space on the cloud hosting provider that hosts RoseHosting. Pretty damning, but it's unsurprising to see some astroturfing. Many of the bigger players just rely on affiliates to do it for them and look the other way.

But I'm not one to accept shitty behavior in this business and just look the other way.

Digging Deeper

Let's see how many more I can dig up. I recognize the Rose Hosting name and know they've spammed me in the past.

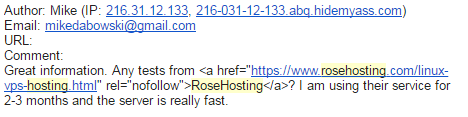

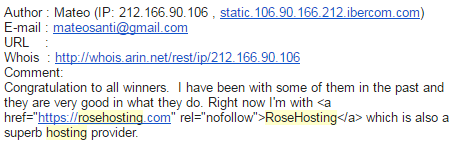

The pattern seems to be emails with nothing associated with them on Google. There is a protected Twitter account with the same username as Pablo, but that's about it.

Mike uses HideMyAss, a VPN service designed to hide identities. VPNs and anonymity have a lot of value, but they also happen to be abused by spammers a lot. This pattern looks nefarious.

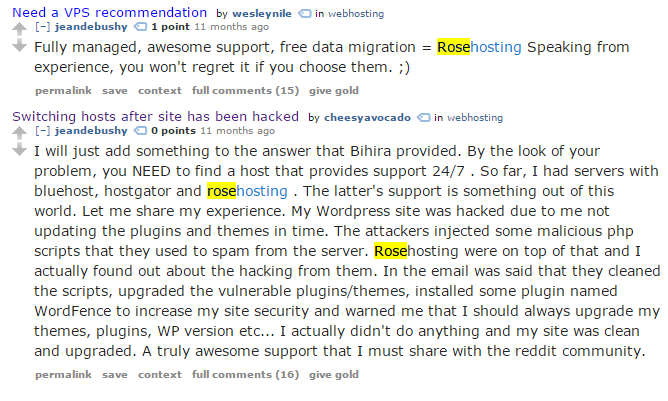

Jean's comment follows the original Oscar comment's template: compliment, rose host spam, compliment.

They all added HTML links with rel="nofollow", probably because they realized Google can easily see comment spam and has cracked down on it. Putting in a nofollow link is supposed to preserve your SEO value by not associating it as a spam link (because it's telling Google not to follow it). Why are these supposed customers adding SEO tactics to their comments and trying to hide their identities?
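Spotting this tell can be automated. The sketch below is not how the comments in this post were found (that was done by eye). It uses Python's standard `html.parser` to pull out links that carry `rel="nofollow"`, and the sample comment and `example.com` URL are made up for illustration.

```python
from html.parser import HTMLParser

class LinkCollector(HTMLParser):
    """Collects (href, has_nofollow) for every <a> tag in a comment body."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attributes = dict(attrs)
        rel_values = (attributes.get("rel") or "").lower().split()
        self.links.append((attributes.get("href"), "nofollow" in rel_values))

def nofollow_links(comment_html: str) -> list:
    """Return the hrefs of links the commenter marked rel="nofollow"."""
    parser = LinkCollector()
    parser.feed(comment_html)
    return [href for href, has_nofollow in parser.links if has_nofollow]

# Hypothetical comment following the compliment / plug / compliment template.
sample = ('Great post! My host <a href="https://example.com/" '
          'rel="nofollow">Example Host</a> is wonderful. Keep it up!')
print(nofollow_links(sample))  # ['https://example.com/']
```

A hand-written nofollow link is not proof of spam on its own, but ordinary commenters rarely bother to add one.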

The Boss Man

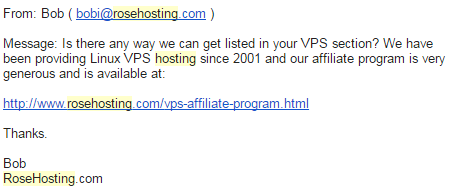

I also got this email from Bob, who I assume is the owner based on what's listed publicly and the interviews he's done on at least one other review site which I don't trust a bit, and won't link to either.

But it's all class, I want to get listed and pay a lot.

So at best they are a 'subtle' please-promote-me-for-money kind of web hosting company (which almost every host will do). At worst, they are a comment spamming and potentially astroturfing/sockpuppeting web host.

Searching For More

I searched WebHostingTalk, the largest web hosting forum that has run forever and has over 9 million posts.

Just about everyone is talked about here. They have a company account that constantly posts ads. But how is it that in 14 years there are only 2 reviews and most of the threads are asking 'who?' Yet somehow, my blog is getting hordes of accounts recommending them. Another red flag.

Did they learn their lesson on WHT when an account got questioned about sounding like a shill? So the largest forum with 9,000,000 posts has basically nothing about them.

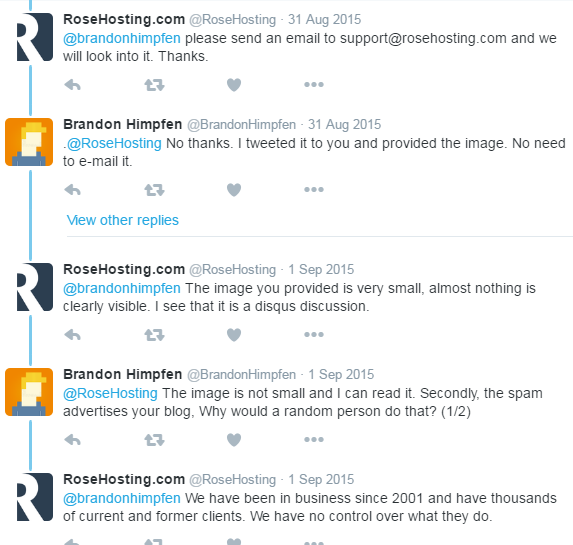

I kept searching and stumbled upon this gem on Twitter

.@RoseHosting Stop spamming my website with your tutorials; leaving comments about my tutorials and then linking to your website's tutorial.

— Brandon Himpfen (@BrandonHimpfen) August 30, 2015

I sense a pattern. Those crazy customers of ours who link to git and tomcat installation tutorials. Carl had a bit of a spamming spree according to Google.

Let's keep digging.

Sockpuppets and Patterns

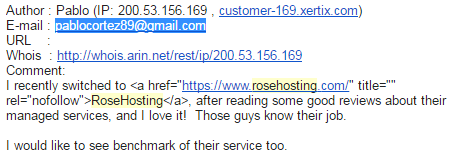

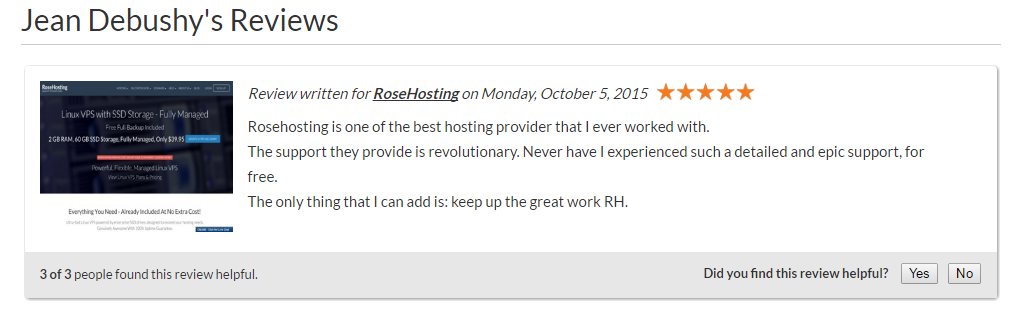

Looks like I found Jean Debushy!

And again.

And again. Deep linking their ubuntu VPS on an ubuntu tutorial too, nice SEO tactic.

It's not a good spam campaign without hitting Quora!

So this name exists solely to promote RoseHosting and it all seemed to happen in October 2015. That's suspicious to say the least.

At this point it became clear that the sockpuppeting is more organized than I originally thought.

Organized Sockpuppets

I started to search the other names I had been spammed from and easily found more bad behavior.

Oscar is alive and well, it seems, on Disqus and DiscoverCloud.

The Smoking Gun

Quora was the gold mine for uncovering this spam network. Once I found a couple accounts on Quora, I could go through their history and see who upvoted their posts. It would be practical if you were running a spam network to have many accounts upvoting one another to give yourself more visibility. More upvotes, more traffic, easier for me to track it all down.

I discovered 51 accounts connected to RoseHosting and mapped out how they connected to one another. I took those same names and searched for their re-use across other sites. 10 showed up on Serchen, 3 on HostReview, 6 on DiscoverCloud, 6 on HostAdvice, 3 on TrustPilot, 1 on Reviews.co.uk - all industry review sites being manipulated by these same spam accounts. I also discovered 11 more accounts connected to various review sites and comment spam.
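The mapping itself was done by hand, but the idea of starting from a few known accounts and following upvotes outward can be sketched as a breadth-first search over an upvote graph. Everything here is illustrative: the account names are invented and `upvotes` stands in for whatever list of (voter, author) pairs you collect from the profile pages.

```python
from collections import defaultdict, deque

def build_graph(upvotes):
    """Undirected graph: an edge joins a voter and the author they upvoted."""
    graph = defaultdict(set)
    for voter, author in upvotes:
        graph[voter].add(author)
        graph[author].add(voter)
    return graph

def connected_accounts(upvotes, seeds):
    """Every account reachable from the seed accounts through upvote links."""
    graph = build_graph(upvotes)
    seen = set(seeds)
    queue = deque(seeds)
    while queue:
        account = queue.popleft()
        for neighbour in graph[account]:
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append(neighbour)
    return seen

# Invented accounts: a, b and c upvote each other; x and y are unrelated.
upvotes = [("a", "b"), ("b", "c"), ("c", "a"), ("x", "y")]
print(sorted(connected_accounts(upvotes, ["a"])))  # ['a', 'b', 'c']
```

Genuine users upvote answers too, so a search like this over-collects. Each account it surfaces still needs a manual look at its history before it is counted as part of the network.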

Rose Hosting Quora Spam Network

This graph charts the connections (upvotes) of RoseHosting associated Quora accounts. If you hover over a name it links to the Quora details and any other related content spamming like review sites.

Aftermath

I tried for months to reach out to Quora and have never heard a word from them. I did notice when I last checked (March 28, 2017) that at least some of the accounts have been banned. Maybe someone actually read my email and just didn't have the time to respond.

I have reached out to the web hosting review sites and will update as I hear back from them. The only company that did respond and acknowledged the issue was HostAdvice (not to be confused with HostingAdvice which steals Review Signal content to mislead its visitors).

Sources

Full Data Table Available on Google Docs

Bonus

Thanks RoseHosting for having the decency to make sure you spammed this article as well. I am guessing your spammers don't understand irony. Or possibly the English language.

A new comment on the post "Uncovering the Rose Hosting Spam Network on Quora" is waiting for your approval

https://reviewsignal.com/blog/2017/03/31/uncovering-the-rose-hosting-spam-network-on-quora/ Author: Merritt George (IP: 75.86.176.9, cpe-75-86-176-9.wi.res.rr.com)

Email: merritt.george@gmail.com

URL:

Comment:

That's a great article! There sure are interesting parts of web hosting that people don't know about.So hey I wanted to know if you do reviews on new sites? I was looking around and noticed that my current webhost, <a href="https://www.rosehosting.com/" rel="nofollow">Rose Hosting</a> wasn't listed and that's a shame! In a sea of companies with no scruples, they've stood out to me as a solid company that doesn't resort to shady tactics, delivers quality support, and has great uptime. Would love to see a benchmark!

Bonus: Fake Review Screenshots

SliceHost Removed from Review Signal

SliceHost was acquired by RackSpace in 2008. The SliceHost brand hasn't operated in many years, it was time to remove it from our listings.

Goodbye HostingReviews.io, I Will Miss You

It's strange writing a positive article about a competitor. This industry is so full of shit, that it feels weird to be writing this. But if we don't recognize the good as well as the bad, what's the point?

In order to bring you more accurate web hosting reviews, HostingReviews.io is now merged with HostingFacts.com and soon to be redirected to HostingFacts.com

- HostingReviews.io popup notice

HostingReviews.io was a project created by Steven Gliebe. It was basically a manually done version of Review Signal. He went through by hand categorizing tweets and displaying data about hosting companies. Besides the automated vs manual approach, the only other big difference was he didn't use affiliate links. It was bought out by HostingFacts.com last year sometime and left alone.

I'm truly saddened because it's disappearing at some point 'soon.' It was the only real competitor whose data I trusted to compare myself against. So I thought I would take the opportunity to write about my favorite competitor.

I am consciously not linking to either in this article because HostingFacts.com, who purchased HostingReviews.io, has HostGator listed as their #1 host in 2017, a company rated terribly by both Review Signal (42%) and HostingReviews.io (9%). So whatever their methodology purports to be, it's so drastically out of line with the data Steven and I have meticulously collected for years that I don't trust it one bit. Not to mention their misleading and bizarre rankings showing BlueHost #4 and in the footer recommending A Small Orange as the #4 rated company. In their actual review, they recommend A Small Orange. I guess they missed The Rise and Fall of A Small Orange and the fact that HostingReviews.io (March 2017) has ASO at 27%.

It would be easy to be upset that someone copied the same idea, but the reality is, it's quite helpful. Steven is a completely different person, with different interests, values and techniques. He also didn't put in any affiliate links, which many people believe are inherently corrupt. But our results, for the most part, were very much the same. So the whole idea that affiliate links corrupt Review Signal rankings, I could pretty confidently throw out the door.

I just want to clarify that I had actually built most of the site before seeing Review Signal. I probably wouldn't have started if I knew about yours first. We came up with the same idea independently. They are similar but nothing was copied. I was a bit disappointed to find out that I wasn't first but later happy, after chatting with you and seeing that we were kind of the Two Musketeers of web hosting reviews.

- Steven Gliebe

I decided to actually look at how close we really were using old archive.org data from Jan 2015 when his site was still being actively updated a lot.

Comparing HostingReviews.io vs Review Signal Ratings (Jan 2015). I've only included companies that both sites covered for comparison's sake.

| Company | HR Rating | Review Signal Rating | Rating Difference | HR Rank | RS Rank | Rank Difference |

| Flywheel | 97 | 93 | -4 | 1 | 1 | 0 |

| Pagely | 81 | 56 | -25 | 2 | 18 | -16 |

| SiteGround | 79 | 74 | -5 | 3 | 4 | -1 |

| WiredTree | 75 | 67 | -8 | 4 | 12 | -8 |

| A Small Orange | 74 | 77 | 3 | 5 | 2 | 3 |

| Linode | 72 | 74 | 2 | 6 | 5 | 1 |

| WP Engine | 72 | 74 | 2 | 7 | 6 | 1 |

| LiquidWeb | 70 | 70 | 0 | 8 | 8 | 0 |

| DigitalOcean | 66 | 75 | 9 | 9 | 3 | 6 |

| MidPhase | 61 | 59 | -2 | 10 | 16 | -6 |

| HostDime | 60 | 60 | 0 | 11 | 14 | -3 |

| Servint | 59 | 60 | 1 | 12 | 15 | -3 |

| Amazon Web Services | 55 | 67 | 12 | 13 | 13 | 0 |

| SoftLayer | 50 | 70 | 20 | 14 | 9 | 5 |

| DreamHost | 49 | 55 | 6 | 15 | 19 | -4 |

| Synthesis | 47 | 74 | 27 | 16 | 7 | 9 |

| Microsoft Azure | 43 | 70 | 27 | 17 | 10 | 7 |

| InMotion Hosting | 43 | 52 | 9 | 18 | 20 | -2 |

| WestHost | 43 | 51 | 8 | 19 | 21 | -2 |

| Rackspace | 40 | 69 | 29 | 20 | 11 | 9 |

| Media Temple | 35 | 58 | 23 | 21 | 17 | 4 |

| GoDaddy | 30 | 45 | 15 | 22 | 25 | -3 |

| HostMonster | 25 | 42 | 17 | 23 | 29 | -6 |

| Bluehost | 22 | 46 | 24 | 24 | 24 | 0 |

| Just Host | 22 | 39 | 17 | 25 | 30 | -5 |

| Netfirms | 21 | 44 | 23 | 26 | 26 | 0 |

| Hostway | 18 | 44 | 26 | 27 | 27 | 0 |

| iPage | 16 | 44 | 28 | 28 | 28 | 0 |

| HostGator | 13 | 49 | 36 | 29 | 22 | 7 |

| Lunarpages | 11 | 49 | 38 | 30 | 23 | 7 |

| Mochahost | 10 | 20 | 10 | 31 | 34 | -3 |

| 1&1 | 6 | 36 | 30 | 32 | 32 | 0 |

| Verio | 5 | 35 | 30 | 33 | 33 | 0 |

| IX Web Hosting | 0 | 38 | 38 | 34 | 31 | 3 |

The biggest difference is Pagely. I'm not sure why we're so different, but it could be a few factors: small sample size (HR had 42 reviews vs RS having 291), time frame (Review Signal has been collecting data on companies as early as 2011) or perhaps categorization methodology.

To calculate the actual ratings, we both used the same simple formula of % positive reviews (Review Signal has since changed its methodology to decrease the value of older reviews). The ratings differ a lot more than the ranking order does. This could also be a sampling or categorization issue, but the rankings actually were a lot closer than the rating numbers, especially at the bottom. The biggest differences were Pagely, WiredTree, WebSynthesis, Azure, RackSpace, HostGator, and LunarPages. Review Signal had most of those companies higher than HostingReviews, with the exceptions of Pagely and WiredTree. For WiredTree, the actual % difference isn't that high; it looks more distorted because of how many companies were ranked in that neighborhood. Pagely remains the only concerning discrepancy, some of which could be corrected by using a different rating algorithm to compensate for small sample sizes (Wilson Confidence Interval). If you use a Wilson Confidence Interval with 95% confidence, Pagely would have 67%, which cuts the difference to 11 percentage points. Something still is off, but I'm not sure what. Towards the bottom, HostingReviews had companies with a lot lower ratings in general. I'm not sure why that is, but it doesn't concern me greatly: whether a company is at 20% or 40%, that's pretty terrible through any lens.

The Wilson Confidence Interval is something I'm a big fan of, but the trouble is explaining it. It's not exactly intuitive and most users won't understand. To get around that problem here at Review Signal, I don't list companies with small sample sizes. I think it's unfair to small companies because those companies will always have lower scores using a Wilson Confidence Interval.
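For anyone who wants to see the mechanics, here is a minimal sketch of the lower bound of the Wilson score interval. It is the standard textbook formula, not code lifted from either site, with z = 1.96 for 95% confidence. Feeding in the published, rounded rating (0.81 for Pagely) along with the review count (42) is an assumption about the inputs; it lands on the roughly 67% figure quoted above.

```python
import math

def wilson_lower_bound(positive_share: float, reviews: int, z: float = 1.96) -> float:
    """Lower bound of the Wilson score interval for a share of positive reviews.

    positive_share: fraction of reviews that are positive (0.0 to 1.0)
    reviews: total number of reviews behind that fraction
    z: normal quantile, 1.96 for roughly 95% confidence
    """
    if reviews == 0:
        return 0.0
    p, n = positive_share, reviews
    centre = p + z * z / (2 * n)
    margin = z * math.sqrt(p * (1 - p) / n + z * z / (4 * n * n))
    return (centre - margin) / (1 + z * z / n)

# Pagely on HostingReviews: 81% positive from 42 reviews.
print(round(wilson_lower_bound(0.81, 42), 2))    # 0.67
# The same 81% backed by 4,200 reviews is barely pulled down at all.
print(round(wilson_lower_bound(0.81, 4200), 2))  # 0.8
```

The second call shows why the score punishes small samples: the same share of positive reviews counts for more when more reviews back it up.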

I always thought if you were going to list low data companies, you would have to use it for the ratings to be meaningful. So I went ahead and applied it to HostingReviews since they list low data companies.

| Company | HR Rating | HR Wilson Score | RS Rating | Wilson HR Rank | RS Rank | Rank Difference |

| Flywheel | 97 | 0.903664487437776 | 93 | 1 | 1 | 0 |

| SiteGround | 79 | 0.725707872732548 | 74 | 2 | 4 | -2 |

| Linode | 72 | 0.667820465467272 | 74 | 3 | 5 | -2 |

| Pagely | 81 | 0.667522785017387 | 56 | 4 | 18 | -14 |

| A Small Orange | 74 | 0.665222315806623 | 77 | 5 | 2 | 3 |

| WP Engine | 72 | 0.664495409977001 | 74 | 6 | 6 | 0 |

| DigitalOcean | 66 | 0.632643582791056 | 75 | 7 | 3 | 4 |

| WiredTree | 75 | 0.62426879138105 | 67 | 8 | 12 | -4 |

| LiquidWeb | 70 | 0.591607758450507 | 70 | 9 | 8 | 1 |

| Amazon Web Services | 55 | 0.50771491300961 | 67 | 10 | 13 | -3 |

| DreamHost | 49 | 0.440663157139283 | 55 | 11 | 19 | -8 |

| Servint | 59 | 0.410929374988646 | 60 | 12 | 14 | -2 |

| SoftLayer | 50 | 0.387468960047243 | 70 | 13 | 9 | 4 |

| MidPhase | 61 | 0.3851843256587 | 59 | 14 | 16 | -2 |

| Microsoft Azure | 43 | 0.379726217475451 | 70 | 15 | 10 | 5 |

| HostDime | 60 | 0.357464427565077 | 60 | 16 | 15 | 1 |

| Rackspace | 40 | 0.356282970938665 | 69 | 17 | 11 | 6 |

| Synthesis | 47 | 0.317886056933924 | 74 | 18 | 7 | 11 |

| Media Temple | 35 | 0.305726756042135 | 58 | 19 | 17 | 2 |

| GoDaddy | 30 | 0.277380620794128 | 45 | 20 | 25 | -5 |

| InMotion Hosting | 43 | 0.252456868216651 | 52 | 21 | 20 | 1 |

| WestHost | 43 | 0.214851925523797 | 51 | 22 | 21 | 1 |

| Bluehost | 22 | 0.193489653693868 | 46 | 23 | 24 | -1 |

| Just Host | 22 | 0.130232286167726 | 39 | 24 | 30 | -6 |

| HostGator | 13 | 0.110124578122674 | 49 | 25 | 22 | 3 |

| iPage | 16 | 0.106548464670946 | 44 | 26 | 26 | 0 |

| Netfirms | 21 | 0.1063667334132 | 44 | 27 | 27 | 0 |

| Hostway | 18 | 0.085839112937093 | 44 | 28 | 28 | 0 |

| Lunarpages | 11 | 0.056357713906061 | 49 | 29 | 23 | 6 |

| HostMonster | 25 | 0.045586062644636 | 42 | 30 | 29 | 1 |

| 1&1 | 6 | 0.042907593743725 | 36 | 31 | 32 | -1 |

| Mochahost | 10 | 0.017875749515721 | 20 | 32 | 34 | -2 |

| Verio | 5 | 0.008564782830854 | 35 | 33 | 33 | 0 |

| IX Web Hosting | 0 | 0 | 38 | 34 | 31 | 3 |

This actually made the ranked order between companies even closer between Review Signal and HostingReviews. Pagely and WebSynthesis are still the two major outliers which suggests we have a more fundamental problem between the two sites and how we've measured those companies. But overall, the ranks got closer together, the original being off by a total of 124 (sum of how far off each rank was from one another) and Wilson rank being 104 which is 16% closer together. A win for the Wilson Confidence Interval and sample sizing issues!
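The 124 and 104 totals are just the absolute values of each table's Rank Difference column added together. A quick sketch, with the two lists copied from the tables above in row order:

```python
def total_rank_distance(rank_differences):
    """Sum of how far apart each company's two ranks are, ignoring direction."""
    return sum(abs(difference) for difference in rank_differences)

# Rank Difference column of the first comparison table (original HR ranks).
original = [0, -16, -1, -8, 3, 1, 1, 0, 6, -6, -3, -3, 0, 5, -4, 9, 7,
            -2, -2, 9, 4, -3, -6, 0, -5, 0, 0, 0, 7, 7, -3, 0, 0, 3]

# Rank Difference column of the second table (HR ranked by Wilson score).
wilson = [0, -2, -2, -14, 3, 0, 4, -4, 1, -3, -8, -2, 4, -2, 5, 1, 6,
          11, 2, -5, 1, 1, -1, -6, 3, 0, 0, 0, 6, 1, -1, -2, 0, 3]

before = total_rank_distance(original)
after = total_rank_distance(wilson)
print(before, after)                             # 124 104
print(round(100 * (before - after) / before))    # 16
```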

Bonus: HostingReviews with Wilson Confidence Interval vs Original Rating/Ranking

| Company | Rating | Reviews | Wilson Score | Rating Difference | Rank | Wilson Rank | Difference |

| Flywheel | 97 | 76 | 0.903664487437776 | 7 | 2 | 1 | 1 |

| Kinsta | 100 | 13 | 0.771898156944708 | 23 | 1 | 2 | -1 |

| SiteGround | 79 | 185 | 0.725707872732548 | 6 | 5 | 3 | 2 |

| Pantheon | 84 | 49 | 0.713390268477418 | 13 | 3 | 4 | -1 |

| Linode | 72 | 313 | 0.667820465467272 | 5 | 9 | 5 | 4 |

| Pagely | 81 | 42 | 0.667522785017387 | 14 | 4 | 6 | -2 |

| A Small Orange | 74 | 153 | 0.665222315806623 | 7 | 7 | 7 | 0 |

| WP Engine | 72 | 278 | 0.664495409977001 | 6 | 10 | 8 | 2 |

| DigitalOcean | 66 | 1193 | 0.632643582791056 | 3 | 13 | 9 | 4 |

| WiredTree | 75 | 57 | 0.62426879138105 | 13 | 6 | 10 | -4 |

| Google Cloud Platform | 70 | 101 | 0.604645970406924 | 10 | 11 | 11 | 0 |

| LiquidWeb | 70 | 79 | 0.591607758450507 | 11 | 12 | 12 | 0 |

| Vultr | 73 | 33 | 0.560664188794383 | 17 | 8 | 13 | -5 |

| Amazon Web Services | 55 | 537 | 0.50771491300961 | 4 | 18 | 14 | 4 |

| DreamHost | 49 | 389 | 0.440663157139283 | 5 | 21 | 15 | 6 |

| GreenGeeks | 59 | 39 | 0.434429655157957 | 16 | 16 | 16 | 0 |

| Servint | 59 | 29 | 0.410929374988646 | 18 | 17 | 17 | 0 |

| SoftLayer | 50 | 72 | 0.387468960047243 | 11 | 19 | 18 | 1 |

| Site5 | 49 | 83 | 0.385299666256405 | 10 | 22 | 19 | 3 |

| MidPhase | 61 | 18 | 0.3851843256587 | 22 | 14 | 20 | -6 |

| Microsoft Azure | 43 | 358 | 0.379726217475451 | 5 | 24 | 21 | 3 |

| HostDime | 60 | 15 | 0.357464427565077 | 24 | 15 | 22 | -7 |

| Rackspace | 40 | 461 | 0.356282970938665 | 4 | 28 | 23 | 5 |

| Synthesis | 47 | 36 | 0.317886056933924 | 15 | 23 | 24 | -1 |

| Media Temple | 35 | 416 | 0.305726756042135 | 4 | 30 | 25 | 5 |

| Arvixe | 41 | 59 | 0.293772727671168 | 12 | 27 | 26 | 1 |

| GoDaddy | 30 | 1505 | 0.277380620794128 | 2 | 32 | 27 | 5 |

| InMotion Hosting | 43 | 23 | 0.252456868216651 | 18 | 25 | 28 | -3 |

| Web Hosting Hub | 38 | 34 | 0.237049871468482 | 14 | 29 | 29 | 0 |

| WebHostingBuzz | 50 | 8 | 0.215212526824442 | 28 | 20 | 30 | -10 |

| WestHost | 43 | 14 | 0.214851925523797 | 22 | 26 | 31 | -5 |

| Bluehost | 22 | 853 | 0.193489653693868 | 3 | 34 | 32 | 2 |

| Just Host | 22 | 54 | 0.130232286167726 | 9 | 35 | 33 | 2 |

| HostGator | 13 | 953 | 0.110124578122674 | 2 | 41 | 34 | 7 |

| iPage | 16 | 128 | 0.106548464670946 | 5 | 39 | 35 | 4 |

| Netfirms | 21 | 34 | 0.1063667334132 | 10 | 36 | 36 | 0 |

| Pressable | 19 | 43 | 0.100236618545274 | 9 | 37 | 37 | 0 |

| Hostway | 18 | 34 | 0.085839112937093 | 9 | 38 | 38 | 0 |

| Fasthosts | 12 | 179 | 0.080208937918757 | 4 | 42 | 39 | 3 |

| HostPapa | 14 | 72 | 0.078040723708843 | 6 | 40 | 40 | 0 |

| Globat | 33 | 3 | 0.060406929099298 | 27 | 31 | 41 | -10 |

| Lunarpages | 11 | 71 | 0.056357713906061 | 5 | 43 | 42 | 1 |

| HostMonster | 25 | 4 | 0.045586062644636 | 20 | 33 | 43 | -10 |

| 1&1 | 6 | 540 | 0.042907593743725 | 2 | 46 | 44 | 2 |

| Mochahost | 10 | 10 | 0.017875749515721 | 8 | 44 | 45 | -1 |

| JaguarPC | 10 | 10 | 0.017875749515721 | 8 | 45 | 46 | -1 |

| Verio | 5 | 19 | 0.008564782830854 | 4 | 47 | 47 | 0 |

| IPOWER | 5 | 19 | 0.008564782830854 | 4 | 48 | 48 | 0 |

| IX Web Hosting | 0 | 45 | 0 | 0 | 49 | 49 | 0 |

| PowWeb | 0 | 10 | 0 | 0 | 50 | 50 | 0 |

| MyHosting | 0 | 9 | 0 | 0 | 51 | 51 | 0 |

| WebHostingPad | 0 | 5 | 0 | 0 | 52 | 52 | 0 |

| HostRocket | 0 | 2 | 0 | 0 | 53 | 53 | 0 |

| Superb Internet | 0 | 1 | 0 | 0 | 54 | 54 | 0 |

You will notice the biggest differences are companies with more reviews generally moving up in rank, small sample sizes move down. Because the sample sizes are so small on some companies, you can see their % rating drops dramatically. But since most companies don't have a lot of data, it doesn't influence the rankings as much.

Conclusion

It's been nice having HostingReviews.io around when it was actively being updated (the manual process is certainly overwhelming for any individual I think!). I will miss having a real competitor to compare what I'm seeing in my data. I don't know the new owners, but I do consider Steven, the creator, a friend and wish him the best of luck going forward while he works on his primary business, ChurchThemes.com. It saddens me to see the new owners ruining his work with what looks like another mediocre affiliate review site pushing some of the highest paying companies in the space. But it's yet another unfortunate reminder of why I'm so disappointed by the web hosting review industry.

Sources: All data was pulled from Archive.org.

http://web.archive.org/web/20150113073121/http://hostingreviews.io/

http://web.archive.org/web/20150130063013/https://reviewsignal.com/webhosting/compare/

HostingAdvice.com Steals Review Signal’s Content and Uses it to Mislead Visitors

This was originally written on July 7, 2015. The screenshots are mostly from that period using archive.org. The site has changed (no longer has a Top 10 that I see, but still misuses Review Signal in the exact same way). I was hesitant to bash competitors, but I decided I don't care, they are the ones behaving badly, I will call them out on it.

This is Episode 2 of Dirty Slimy Shady Secrets of the Web Hosting Review World

I've long hated the fake review sites that plague the web hosting review business. But it just became even more personal. HostingAdvice.com decided to take reviews from Review Signal, edit them and selectively use them to promote companies with very poor ratings.

Let's take a look at what is happening at HostingAdvice.com (This links to archive of their site in case they change it and I don't want them getting any benefit for the BS they are pulling).

They claim to be an expert and say everyone sucks. They are calling everyone else spammy and unreliable. It's hard to argue with the sentiment considering I have the same stance here.

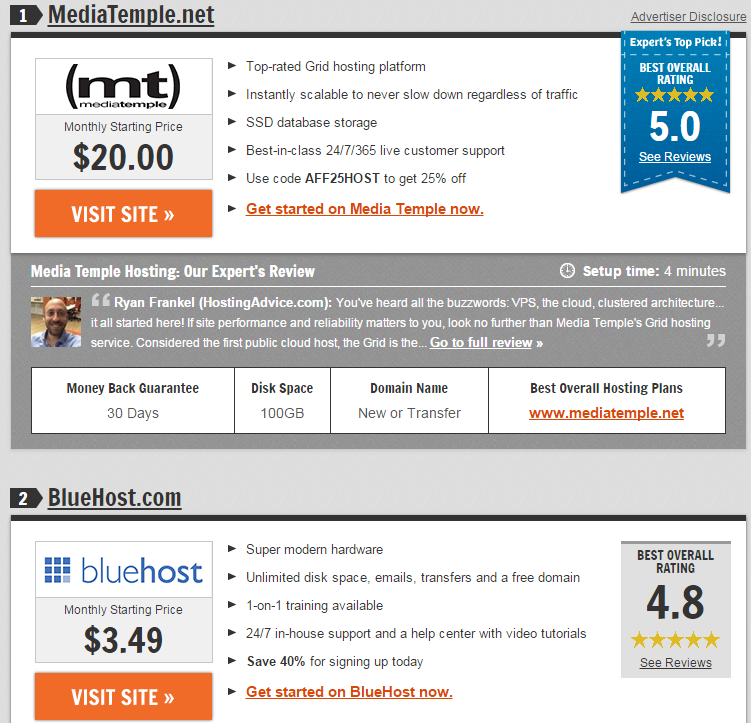

But let's take a look at their Top Hosts in 2015

Media Temple as number one, not the most abusive ranking I've seen. They don't have the best reviews here, but they are 58% (56% as of Jan 2017), which is 2nd tierish, at least more than half their customers are saying good things. BlueHost is #2? That's just nonsense. They have a 47% (40% as of Jan 2017) which means less than half their users are saying good things about them.

BUT WAIT, THERE'S MORE!

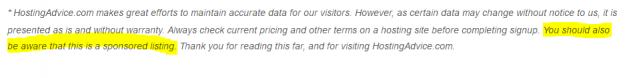

Remember that highfalutin rhetoric about them being different and not spammy/unreliable? How could you possibly need a disclosure like that if it were true? That's right, you're just like every other crap web hosting review site out there trying to pimp the highest paying affiliate program on unsuspecting visitors.

If that wasn't enough, there's always the coup de grâce:

Things are starting to make sense. But none of this has gotten personal yet.

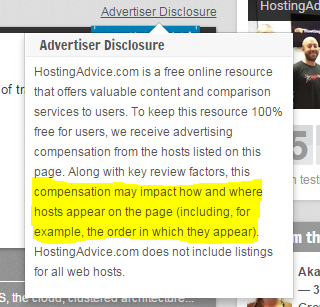

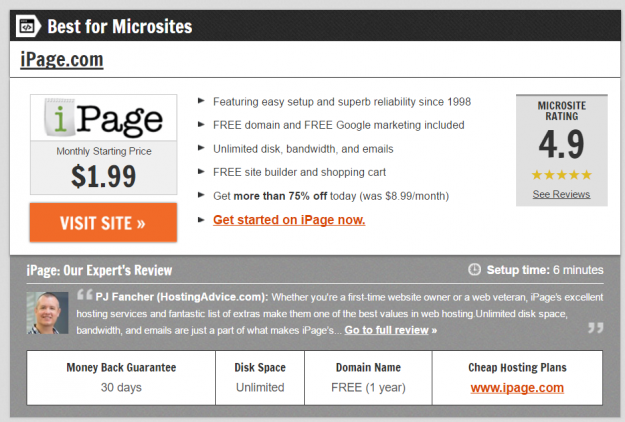

So I took a look at the #3 Ranked iPage and to my absolute delight found this under 'Customer Reviews'

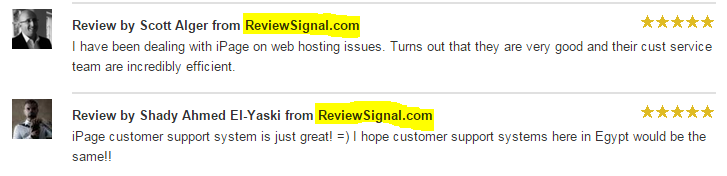

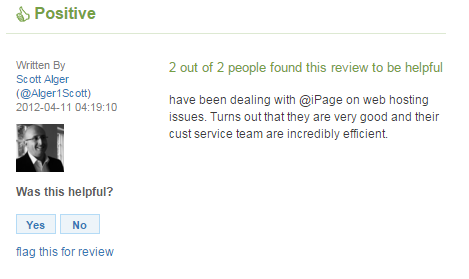

Yes, those are the two highest rated positive comments about iPage on Review Signal.

Except they've been given 5 stars which isn't something we do here. Also, they've edited this review without indicating they changed it (adding 'I'), which tells me they did this by hand and not scraping.

So that five star rating is made up. How made up?

So made up that this stolen review was given four stars. They are simply adding their own narrative and judgement to Review Signal's data.

At Review Signal, we only categorize as positive or negative.

Why does this matter and why is this so personal?

This matters because they were conscious enough of Review Signal to steal its content. They were also conscious enough to cherry-pick the data they wanted in order to push the highest paying affiliates, while ignoring the fact they are selling out to some of the lowest rated companies around. They have JustHost listed as #9 (like many fake review sites have in their top lists) when every indication shows that it has a terrible reputation. It is one of the absolute lowest on this site at 39% (31% as of Jan 2017), or you can look at the 21% on a no-affiliate link site that uses a similar methodology to Review Signal (now down to 7% as of Jan 2017).

2017 Update: iPage is still listed as 5 Stars with a 4.9/5 Rating as one of their best hosts in 2017.

Finally, what made this so personal is they are using the Review Signal brand to mislead consumers. This site was built to help consumers in a space filled with charlatans and it is painful to watch the brand be used by one of them to enhance their bottom line.

If you're not familiar with Review Signal, I suggest starting by looking at our full dataset. Alternatively, you can read about how it works, where our entire methodology is detailed, including the algorithms used to generate our ratings. The gist of it is that we use Twitter data to listen to the good and bad things people are saying about web hosting companies and publish the results. We validate our method against the few available metrics, like NPS scores, when given the opportunity.

A2, CloudWays, Heart Internet, HostPapa, OVH, Pantheon, ScaleWay and TsoHost Added to Review Signal

Happy to announce a lot of new additions to Review Signal including our first UK companies (HeartInternet and TsoHost). UK companies are displayed with a UK flag in search results and on the company pages.

Overall score is in parentheses after the company.

A2 Hosting (49%)

CloudWays (65%)

HeartInternet (28%)

HostPapa (27%)

OVH (38%)

Pantheon (77%)

ScaleWay (62%)

Tsohost (70%)

Review Signal’s Best Web Hosting Companies in 2016

2016 Year in Review

I like to take this opportunity to look back at the year and see how Review Signal has changed. This past year we added ~36,000 new reviews. Added one new company: WebFaction. 49.6% of reviews were positive overall. 52.1% of unique reviews were positive. What is interesting about the difference is that people with negative things to say were more likely to send multiple negative messages, but as a whole more individual people said positive things than negative.

This year was also full of interesting articles that took advantage of our unique position in the web hosting review space. The WordPress Hosting Performance Benchmarks (2016) was the biggest hit as usual. It grew massively in size/scope and tested companies across multiple price tiers up to Enterprise WordPress Hosting.

I also wrote about the Dirty, Slimy Secrets of the Web Hosting Review Underworld. I tracked some major changes too, with The Rise and Fall of A Small Orange and The Sinking of Site 5, which followed Endurance International Group acquisitions and how their ratings fell post-acquisition. A Small Orange's fall from grace even caused the first ranking algorithm update in Review Signal's history.

Best Shared Hosting 2016 – SiteGround [Reviews] (74.2%)

Best Specialty Hosting 2016 – FlyWheel [Reviews] (83.7%)

Best Managed VPS Hosting 2016 – KnownHost [Reviews] (80.9%)

Best Unmanaged VPS Hosting 2016 – Digital Ocean [Reviews] (71.3%)

Best Support 2016 – SiteGround [Reviews] (80.81%). KnownHost [Reviews], LiquidWeb [Reviews], WiredTree [Reviews] all tied for second at 80% (WiredTree was acquired by LiquidWeb in 2016).

A big congratulations goes out to all of this year's winners.
